A small guide to best practices for server monitoring

Best practices for server monitoring begin long before we choose or deploy a tool. They are not a set of fixed guidelines, but rather a way of working and of understanding how to use monitoring software. All of this can be applied to any tool, be it Tivoli, OpenView, Spectrum, Zabbix, Nagios, Pandora FMS or ZenOSS.

Some monitoring tools are more flexible and make the process easier to apply, while others force us to do things their way instead of adapting to our philosophy. Drawing on many years of experience with different types of companies running different applications, we’ve put together a small guide to good server monitoring practices that we hope will help you in your daily work.

Phase 1. Identifying issues when they happen

Identify your assets

This includes everything that can be monitored. You should establish a hierarchy, since different items are related to one another: for example, key items such as databases and the systems they feed. A failure in the database will affect everything that depends on it, and that is just one of the relationships you should bear in mind.
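Once dependencies are written down, finding everything affected by a failure becomes a simple graph walk. The sketch below assumes a hypothetical inventory where each asset lists the assets it feeds; the asset names are illustrative, and the breadth-first search is just one straightforward way to collect direct and indirect dependents.

```python
from collections import deque

# Hypothetical inventory: each asset maps to the assets that depend on it
# (the "feeds" relation). Names are illustrative only.
DEPENDENTS: dict[str, list[str]] = {
    "db-main": ["crm-app", "billing-app"],
    "crm-app": ["customer-portal"],
    "billing-app": ["invoice-batch"],
    "customer-portal": [],
    "invoice-batch": [],
}


def impacted_by(failed: str, dependents: dict[str, list[str]]) -> set[str]:
    """Return every asset that directly or indirectly depends on `failed`."""
    impacted: set[str] = set()
    queue = deque([failed])
    while queue:
        current = queue.popleft()
        for child in dependents.get(current, []):
            if child not in impacted:  # skip assets already reached another way
                impacted.add(child)
                queue.append(child)
    return impacted


if __name__ == "__main__":
    print(sorted(impacted_by("db-main", DEPENDENTS)))
```

With this inventory, a failure in `db-main` reaches all four downstream assets, while a failure in `crm-app` only reaches `customer-portal`.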

Identify what needs to be monitored and what doesn’t

How is this done? By establishing priorities. Add a new column labeled ‘priority’ to your asset list. This will help you get started, since hundreds of items that could be monitored are likely to come up. Begin with what is really critical or high priority.

If you have a security policy, you can “cannibalize” its asset list, since it will already include items as important as business databases, backups and critical infrastructure systems. These items should be the first to be monitored.

Classify your assets

Once you have the list and a priority for each item, focus on the critical items and those related to them. For example, a critical database depends on a base system, which in turn has memory, hard drives and a CPU. All of these items can be considered critical because of their “direct relation” with the main item.
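The rule “whatever a critical item depends on is also critical” can be applied mechanically to the priority column. This is a minimal sketch under assumed names: `DEPENDS_ON` and `PRIORITY` are hypothetical stand-ins for the spreadsheet, and the four priority levels are an assumption. Each dependency inherits the highest priority of any item that relies on it, and priorities are only ever raised.

```python
# Hypothetical "depends on" map: each item lists what it needs in order to work.
DEPENDS_ON: dict[str, list[str]] = {
    "orders-db": ["db-server"],
    "db-server": ["db-server/memory", "db-server/disks", "db-server/cpu"],
    "report-job": ["app-server"],
}

# Priorities assigned by hand in the spreadsheet (illustrative values).
PRIORITY: dict[str, str] = {"orders-db": "critical", "report-job": "low"}

# Assumed priority scale, lowest to highest.
RANK = {"low": 0, "medium": 1, "high": 2, "critical": 3}


def propagate_priority(priority: dict[str, str],
                       depends_on: dict[str, list[str]]) -> dict[str, str]:
    """Give every dependency at least the priority of the items that rely on it."""
    result = dict(priority)
    for item, level in priority.items():
        stack = [item]
        while stack:
            current = stack.pop()
            for dep in depends_on.get(current, []):
                # Only raise a priority, never lower it; when a dependency
                # changes, walk it again so its own dependencies follow.
                if dep not in result or RANK[result[dep]] < RANK[level]:
                    result[dep] = level
                    stack.append(dep)
    return result


if __name__ == "__main__":
    for name, level in sorted(propagate_priority(PRIORITY, DEPENDS_ON).items()):
        print(f"{name}: {level}")
```

Here the memory, disks and CPU of `db-server` end up critical because of the database they support, while `app-server` stays low.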

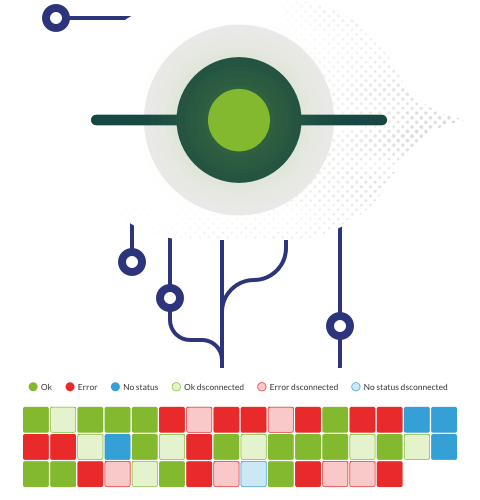

You can create an item hierarchy that helps you understand how the items relate to one another.

Translated into purely technical terms, such a hierarchy could be written as:

● Service accessibility check (TCP port or web transaction).

● Application process running, and its RAM/CPU usage.

● CPU consumption, available RAM and available disk space on the base OS.

● General device status: load average, network traffic…

● Basic device connectivity (ping).
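Read from the bottom up, this list also suggests an order for diagnosis: if the device does not answer ping, a failed service check tells us nothing new. The sketch below assumes each layer is a callable returning `True` when healthy; `tcp_port_open` uses the standard `socket.create_connection` for the service check, while the other layers are hypothetical stubs you would replace with your tool’s own checks. The function names and the port are illustrative.

```python
import socket
from typing import Callable, Optional


def tcp_port_open(host: str, port: int, timeout: float = 2.0) -> bool:
    """Service accessibility check: can we open a TCP connection?"""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:  # refused, unreachable or timed out
        return False


def first_failing_layer(
        layers: list[tuple[str, Callable[[], bool]]]) -> Optional[str]:
    """Run checks from the base layer upwards; return the first that fails.

    Layers above a failed one are not evaluated, since their failure
    would only be a consequence of the lower one.
    """
    for name, check in layers:
        if not check():
            return name
    return None


if __name__ == "__main__":
    layers = [
        ("basic connectivity (ping)", lambda: True),   # stub
        ("general device status", lambda: True),       # stub
        ("base OS resources", lambda: False),          # stub: pretend the disk is full
        ("application process", lambda: True),         # stub
        ("service access (TCP)", lambda: tcp_port_open("127.0.0.1", 5432)),
    ]
    print(first_failing_layer(layers))
```

In this run the base OS layer fails, so the TCP check is never attempted and the report points straight at the lowest broken layer.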

All of this should be grouped into a single item, so that a “simple glance” shows you the necessary information. There are many ways to group it: by service, by technology or by origin (node/agent). The right choice depends on how complex the service is and whether it is part of a cluster. Each application has its own way of doing this; in Pandora FMS it can be done using services, groups or tags.
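Whatever grouping mechanism a tool offers, the “simple glance” usually boils down to summarising each group by its worst member. This tool-agnostic sketch does not use any Pandora FMS API; the tag names, check results and the severity order (including where “unknown” sits) are assumptions for illustration.

```python
# Assumed severity order: the worst member status decides the group status.
SEVERITY = {"ok": 0, "unknown": 1, "warning": 2, "critical": 3}

# Hypothetical check results: (check name, tags, status).
RESULTS = [
    ("orders-db tcp 5432", {"service:orders"}, "ok"),
    ("db-server cpu", {"service:orders", "tech:linux"}, "warning"),
    ("db-server ping", {"service:orders", "tech:linux"}, "ok"),
    ("web-01 disk", {"service:shop", "tech:linux"}, "critical"),
]


def group_status(results: list[tuple[str, set[str], str]]) -> dict[str, str]:
    """Summarise every tag by the worst status among its checks."""
    summary: dict[str, str] = {}
    for _name, tags, status in results:
        for tag in tags:
            summary[tag] = max(summary.get(tag, "ok"), status, key=SEVERITY.__getitem__)
    return summary


if __name__ == "__main__":
    for tag, status in sorted(group_status(RESULTS).items()):
        print(f"{tag}: {status}")
```

The same results can be viewed per service or per technology just by choosing which tags to display.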

Define what to do when there is a problem

This point often goes unnoticed, yet it is essential to good server monitoring practice. What good is detecting problems, even before they occur, if we don’t notify anyone about them efficiently? Monitoring a complex environment can be a very long process and, even with an exception-based (event-based) management system, we run the risk of not identifying urgent issues quickly and efficiently.

We already have a list of high-priority services and the items they include. The next step in our monitoring best practices is to identify a responsible person who can act quickly when a problem occurs. Here we can choose the notification method (email, SMS, a pop-up window in the application) and the escalation level, based on the affected item within the service or on how often the alert recurs. For example, we notify an operator when the CPU of the service’s base system is overloaded and, if that person doesn’t respond, we send an SMS alert to the person responsible for the service.
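The escalation in that example can be written down as a small, explicit policy. This is a hedged sketch: the 15-minute delay, the role names and the channels are assumptions, not values from any particular tool. The key rule is that a step only fires if it became due before anyone acknowledged the alert, so an acknowledgement stops the escalation.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class EscalationStep:
    after_minutes: int  # minutes since the alert was raised
    contact: str        # who is notified (hypothetical role names)
    channel: str        # e.g. "email", "sms", "popup"


# Hypothetical policy mirroring the example in the text.
POLICY = [
    EscalationStep(0, "on-call operator", "email"),
    EscalationStep(15, "service owner", "sms"),
]


def notifications_sent(policy: list[EscalationStep], minutes_open: int,
                       ack_minute: Optional[int] = None) -> list[EscalationStep]:
    """Steps triggered so far for an alert that has been open `minutes_open`.

    A step fires only if it became due before anyone acknowledged the
    alert, so acknowledging stops further escalation.
    """
    return [
        step for step in policy
        if step.after_minutes <= minutes_open
        and (ack_minute is None or step.after_minutes < ack_minute)
    ]


if __name__ == "__main__":
    for step in notifications_sent(POLICY, minutes_open=20):
        print(step.after_minutes, step.contact, step.channel)
```

If the operator acknowledges at minute 10, only the first email is ever sent; if nobody does, the service owner gets an SMS at minute 15.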

Categorize alerts

It’s very important to define which alerts we want to raise and their category, so that we avoid alerting users unnecessarily and our support team knows what priority to give each type of alert. To start with, we could classify our alerts into three groups: Critical, Warning and Message.
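The three categories become most useful when each one maps to a concrete action. The sketch below is one possible mapping, assumed for illustration: Critical pages someone and opens a ticket, Warning only opens a ticket, and Message is recorded without notifying anyone. The action names and the `support_queue` helper are hypothetical.

```python
from enum import Enum


class AlertCategory(Enum):
    CRITICAL = "critical"
    WARNING = "warning"
    MESSAGE = "message"


# Hypothetical routing table: which actions each category triggers.
ROUTING: dict[AlertCategory, tuple[str, ...]] = {
    AlertCategory.CRITICAL: ("page on-call operator", "open ticket"),
    AlertCategory.WARNING: ("open ticket",),
    AlertCategory.MESSAGE: (),  # shown in the event console, nobody is notified
}

# Lower number = handled first by the support team.
ORDER = {AlertCategory.CRITICAL: 0, AlertCategory.WARNING: 1, AlertCategory.MESSAGE: 2}


def support_queue(
        alerts: list[tuple[str, AlertCategory]]) -> list[tuple[str, AlertCategory]]:
    """Alerts that must reach a person, most urgent first."""
    actionable = [a for a in alerts if ROUTING[a[1]]]  # empty tuple = no action
    return sorted(actionable, key=lambda a: ORDER[a[1]])


if __name__ == "__main__":
    alerts = [
        ("disk 85% full", AlertCategory.WARNING),
        ("nightly backup finished", AlertCategory.MESSAGE),
        ("orders-db unreachable", AlertCategory.CRITICAL),
    ]
    for text, category in support_queue(alerts):
        print(category.value, text, ROUTING[category])
```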

At this point we’ve covered three key ideas: listing the assets, classifying services and priorities, and defining who is responsible and how to reach them. All of this can be done with a simple spreadsheet, so up to now these good practices apply to any monitoring tool. Dedicating time to this before setting up monitoring ensures the following:
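Because the plan lives in a spreadsheet, it can also be checked automatically before any tool is configured. This sketch assumes the sheet is exported as CSV with hypothetical columns `asset`, `priority`, `owner` and `notification`, and flags high-priority assets that still lack a responsible person or a notification method. It uses only the standard `csv` module.

```python
import csv
import io

# The planning spreadsheet exported as CSV. Column names and rows are assumptions.
SHEET = """asset,priority,owner,notification
orders-db,critical,ana,sms
db-server,critical,ana,email
backup-nas,critical,,
test-vm,low,,
"""


def missing_owner(rows: list[dict[str, str]],
                  priorities: tuple[str, ...] = ("critical", "high")) -> list[str]:
    """Assets in the given priorities with no owner or no notification method."""
    return [
        row["asset"] for row in rows
        if row["priority"] in priorities
        and not (row["owner"] and row["notification"])
    ]


rows = list(csv.DictReader(io.StringIO(SHEET)))

if __name__ == "__main__":
    print(missing_owner(rows))
```

Low-priority gaps such as `test-vm` are tolerated here; only the critical `backup-nas` is reported.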

- It avoids overlooking the monitoring of relevant items in our systems. This means we can be confident that nothing really bad will happen without our being aware of it. This is one of the most important points, since it allows us to “trust” our own monitoring. There is nothing worse than something bad happening and realizing it was our fault for not monitoring it.

- When something bad happens, we’ll have data about the issue that is accessible and easy to interpret, because we decided to collect information from the entire service rather than from isolated items. This helps us determine the cause of the problem (root cause analysis) naturally, in a way we defined ourselves, independent of the supposed magic some vendors offer.

- When a problem occurs, the people involved will already be informed. We won’t waste time spreading the word about the issue; instead, we’ll work directly on a solution.

- Only the necessary information is shown. This is especially important because, if a whole screen is filled with red icons that mix irrelevant alerts with critical ones, it will take us a long time to find the origin of the problem and our response will be slower and less effective. Too much information can be even more harmful than too little.

Once a working method has been defined, it can be applied to break the main problem (monitoring the entire organization) down into parts, as any competent engineer would: by service, priority, technology, department, geographic location, etc.

Phase 2. Identifying problems before they happen

Once we have the basics down (identifying, without a shadow of a doubt, when something goes wrong), in a second phase we can tackle something much more difficult: determining when a problem is near. This feature, along with automatic root-cause detection and automatic configuration of monitoring tools (smart thresholds, dynamic monitoring, event correlation, big data monitoring, etc.), is among the most sought-after features in any monitoring software product.

In pursuing good server monitoring practices, we must be very wary of false positives and false negatives, which start to appear once we let the system interpret the data. They can lead us to misinterpret a complex situation and, in turn, make the wrong decisions. Over time, every operator develops a basic instinct based on their knowledge of what is normal and what is not: they may not be able to say that something is wrong, but they can sense that something is not right.

We want to stress that nobody has yet achieved total automation. We always recommend that our users and customers think calmly before making a decision and not bet too heavily on extreme automation, which can lead to mistakes that only surface when there is a problem in our installations, when it may be too late to fix them.

“Monitoring by intuition” is a term you won’t have heard yet, not even from Gartner analysts, but its time will come.

What does intuitive monitoring consist of?

There are two ways of approaching it: the pseudo-automated way and the purely visual way. In the first, we define small alerts that warn us when something leaves its “normal” operating thresholds. This doesn’t mean it has entered “harmful” or error thresholds, simply that its values differ from what is considered “normal”. For this we must create an alert category, as mentioned in the first phase, that leaves no room for doubt: these anomalies are not an issue, just something suspicious, so the concept of “criticality” does not apply to them. That way, if there are many events of all types, these can easily be hidden from the general view when necessary.
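The article does not prescribe how “normal” should be computed, so the following is only one simple possibility: treat values within `k` standard deviations of the recent mean as normal, and label anything outside that band but still below the hard error threshold as “suspicious”. The `classify` function, the default `k = 3` and the sample history are assumptions for illustration; real baselines often need to account for daily or weekly patterns.

```python
from statistics import mean, stdev


def classify(value: float, history: list[float],
             critical_threshold: float, k: float = 3.0) -> str:
    """Return 'critical', 'suspicious' or 'normal' for a new sample.

    'suspicious' means outside the usual band (mean +/- k standard
    deviations of recent history) but still below the hard error
    threshold. `history` needs at least two samples for stdev().
    """
    if value >= critical_threshold:
        return "critical"
    mu = mean(history)
    sigma = stdev(history)
    if abs(value - mu) > k * sigma:
        return "suspicious"
    return "normal"


# Hypothetical recent CPU usage samples (%).
HISTORY = [20, 22, 21, 19, 23, 20, 21]

if __name__ == "__main__":
    for sample in (22, 45, 95):
        print(sample, classify(sample, HISTORY, critical_threshold=90))
```

A CPU reading of 45% is far from harmful, but it is well outside this server’s usual band, which is exactly the kind of event the “suspicious” category is meant to surface without raising a critical alert.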

The other way is to create dashboards or displays (each tool has its own name for them) that show a group of real-time graphs on a very large screen, so that everyone sees the same information. An operator who always looks at the same displays, in the same order, develops over time the ability to tell when something isn’t right.

The necessary tools

Without going into specific applications, this section covers the features that are essential for applying a useful monitoring process in any organization that takes its operations seriously.

Some indispensable features for any software that claims to add value are:

● Alerts. They must allow escalation, support item groups (correlation) and let users define complex actions (beyond sending an email or SMS notification). Now that many organizations use collaborative tools such as Slack or Mattermost, the ability to post an event to a group channel, including a graph, a description of the issue and a direct link to the monitoring console, allows for a much quicker response than a simple SMS alert.

● Graphs. Graphs should be a working tool, not something static. This means they must let us filter them, click on them to drill down, combine them dynamically with other data series, and show detailed evolution over long periods that can be compared with the same intervals in previous months. Graphs are the main source of numerical analysis available to us, and they convey a lot of information in a very easy-to-interpret way. A system with static graphs can be very aesthetically pleasing, but it isn’t useful.

● Logs. The next step when approaching an issue or suspected problem is to analyze the raw information. This can be done through data tables or through the raw data being fed into the system (log records). If this data is missing, we are limited to graphs and events.

● Direct access to the source. This goes beyond what a monitoring system generally does but, if we have precise information (alerts), data series that help us understand the behaviour (graphs) and precise data that helps narrow down our analysis (logs), the next logical step is to access the system that generates all that information directly. A monitoring tool that gives easy access to that system simply closes the loop.
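Posting an event to a chat channel, as described under Alerts, is often done through an incoming webhook. Slack and Mattermost both offer incoming webhooks that accept a JSON body with a `text` field; this sketch relies only on that field. The webhook URL, the message layout and the helper names are hypothetical, and real deployments usually add richer formatting supported by each platform.

```python
import json
import urllib.request

# Hypothetical incoming-webhook URL; replace with the one your chat tool issues.
WEBHOOK_URL = "https://chat.example.com/hooks/REPLACE_ME"


def build_event_message(title: str, category: str, description: str,
                        graph_url: str, console_url: str) -> dict[str, str]:
    """Plain-text event summary with links to the graph and the console."""
    text = (
        f"[{category.upper()}] {title}\n"
        f"{description}\n"
        f"Graph: {graph_url}\n"
        f"Open in monitoring console: {console_url}"
    )
    return {"text": text}


def post_to_chat(payload: dict[str, str], url: str = WEBHOOK_URL,
                 timeout: float = 5.0) -> int:
    """POST the payload as JSON and return the HTTP status code."""
    request = urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.status


if __name__ == "__main__":
    message = build_event_message(
        "CPU overload on db-server", "critical",
        "CPU above 95% for 10 minutes",
        "https://monitoring.example.com/graph/1",
        "https://monitoring.example.com/agent/7",
    )
    print(message["text"])  # call post_to_chat(message) once WEBHOOK_URL is real
```

Keeping message construction separate from sending makes it easy to reuse the same summary for email or SMS notifications.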

We hope this article on good server monitoring practices has given you a clearer idea of how to carry out an effective monitoring process. If you have any questions, comments or suggestions, don’t hesitate to contact us; we’ll be delighted to reply.

Pandora FMS’s editorial team is made up of a group of writers and IT professionals with one thing in common: their passion for computer system monitoring.