Right, we start from the idea that measuring is a form of dominance over things.

In fact, we could say that you can’t control what you don’t measure.

That is why it is essential to deliberately choose what is measured (especially with our metrics).

Let’s get real. Not all metrics are useful metrics

Today we will talk about metrics in the context of an organization’s environment and goals. Therefore, measurements will always be related to:

- Historical data.

- Trends.

- Usage patterns.

- Typical lines.

- Common bases.

- Current values.

This context has always provided important and obvious benchmarks when comparing and analyzing an IT environment’s performance.

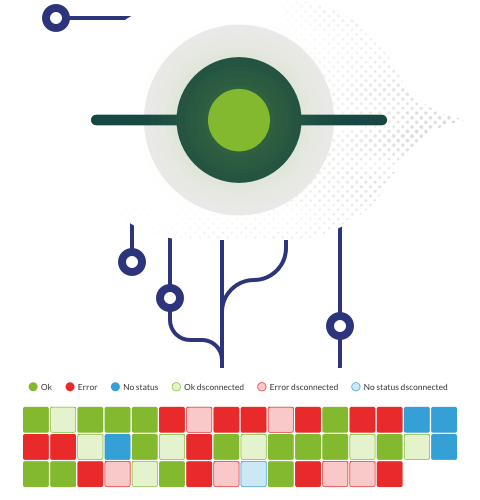

Thus you will determine “what is right”, “what is wrong” and what you just don’t care about.

Some metrics do not tell the whole truth

We know that availability is usually calculated by dividing the time a service was actually available by the total time it could have been available in a given interval.

0.9999 availability, for example, is considered a satisfactory result by most standards. Buuuuut, depending on when it takes place, that little downtime can be insignificant or important.
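To put a number on "that little downtime", here is a minimal sketch of the calculation in Python. The function names and the 30-day month are assumptions made for the example, not part of any particular monitoring tool.

```python
def availability(uptime_minutes: float, total_minutes: float) -> float:
    """Fraction of the interval during which the service was up."""
    if total_minutes <= 0:
        raise ValueError("total_minutes must be positive")
    return uptime_minutes / total_minutes


def downtime_budget_minutes(target: float, total_minutes: float) -> float:
    """Minutes of downtime the interval allows for a target availability."""
    return (1.0 - target) * total_minutes


# Assumption for the example: a 30-day month.
MINUTES_IN_30_DAYS = 30 * 24 * 60

budget = downtime_budget_minutes(0.9999, MINUTES_IN_30_DAYS)
measured = availability(MINUTES_IN_30_DAYS - 4, MINUTES_IN_30_DAYS)

print(f"Downtime budget at 99.99%: {budget:.2f} minutes")
print(f"Availability with 4 minutes down: {measured:.6f}")
```

Under these assumptions, 99.99% leaves a budget of a little over four minutes per month. The formula says nothing about when those minutes happen.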

It is not the same if there’s inactivity between 2 and 3 in the morning for an online retailer or if it is at a time of great activity, near the end of the month or shortly before closing.

We are saying that the opportunity cost of each minute can range between tens of thousands of euros and hundreds of thousands of euros if it actually takes place just before holidays or during the period of greatest commercial activity.

With this we just intend to illustrate the relativism we live in and how crucial the context is to understand measures.

At first glance we could say 99.99% is cool. However, it wouldn’t be so cool if that 0.01% of downtime matches the application’s demand spikes.

In this case, 99.99% uptime creates an over-optimistic image and may work against investigation of the underlying factors that may be causing potential performance issues or improper workload distribution.
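One hedged way to see how optimistic a time-based figure can be is to weight availability by demand instead of by the clock. The sketch below uses made-up traffic (a flat 100 requests per minute with a ten-minute spike) and a single minute of downtime placed at two different moments. All names and numbers are illustrative assumptions.

```python
def time_availability(down_flags):
    """Share of intervals in which the service was up."""
    up = sum(1 for down in down_flags if not down)
    return up / len(down_flags)


def demand_weighted_availability(requests, down_flags):
    """Share of requested work that arrived while the service was up."""
    total = sum(requests)
    served = sum(r for r, down in zip(requests, down_flags) if not down)
    return served / total


# Hypothetical day: 1,440 one-minute intervals at 100 requests per minute,
# plus a ten-minute spike at 5,000 requests per minute.
requests = [100] * 1440
for minute in range(600, 610):
    requests[minute] = 5000

# The same single minute of downtime, at a quiet moment and during the spike.
quiet_outage = [minute == 150 for minute in range(1440)]
peak_outage = [minute == 605 for minute in range(1440)]

for label, outage in (("quiet", quiet_outage), ("peak", peak_outage)):
    by_time = time_availability(outage)
    by_demand = demand_weighted_availability(requests, outage)
    print(f"{label}: time-based {by_time:.5f}, demand-weighted {by_demand:.5f}")
```

Both outages give exactly the same time-based availability, yet the demand-weighted figure drops noticeably when the outage lands on the spike. Tracking both side by side is one possible way to keep the uptime number honest.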

In addition, a serious question arises:

(Serious voice).

Is the drop at times of absolute peak use a sign that the app is under-resourced or misplaced, or is it just an unfortunate coincidence?

Load spike failures have financial repercussions due to missed opportunities, but they also require a thorough examination of reliability, availability, network performance, and capacity KPIs, among others, to determine if any adjustments or fine-tuning are necessary.

Are there hidden costs?

Something is clear right here:

The use of automation should be compared against service ticket resolution times and the organization’s standard problem resolution times.

In theory, at least in theory, the more automation is used, the faster open tickets should be solved.

Therefore, it is important for IT organizations to track the mean time to resolution (MTTR) of service tickets, as well as the amount and effectiveness of automation being used in their workflows for:

- Configuration.

- Provisioning.

- Maintenance.

- Problem solving.
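As a rough sketch of what tracking both things together could look like, the Python snippet below computes MTTR separately for tickets that went through an automated workflow and tickets that did not. The ticket records and the `automated` flag are hypothetical. A real ticketing system would expose its own fields.

```python
from datetime import datetime
from statistics import mean

# Hypothetical tickets: (opened, resolved, handled by an automated workflow)
tickets = [
    (datetime(2024, 1, 8, 9, 0), datetime(2024, 1, 8, 9, 30), True),
    (datetime(2024, 1, 8, 10, 0), datetime(2024, 1, 8, 11, 0), True),
    (datetime(2024, 1, 9, 8, 0), datetime(2024, 1, 9, 9, 30), True),
    (datetime(2024, 1, 9, 9, 0), datetime(2024, 1, 9, 11, 0), False),
    (datetime(2024, 1, 10, 9, 0), datetime(2024, 1, 10, 13, 0), False),
    (datetime(2024, 1, 11, 9, 0), datetime(2024, 1, 11, 15, 0), False),
]


def mttr_hours(rows):
    """Mean time to resolution, in hours, for a non-empty list of tickets."""
    durations = [
        (resolved - opened).total_seconds() / 3600
        for opened, resolved, _ in rows
    ]
    return mean(durations)


automated = [t for t in tickets if t[2]]
manual = [t for t in tickets if not t[2]]
automation_share = len(automated) / len(tickets)

print(f"Automation share: {automation_share:.0%}")
print(f"MTTR automated: {mttr_hours(automated):.1f} h")
print(f"MTTR manual:    {mttr_hours(manual):.1f} h")
```

Splitting MTTR this way is only one possible design. It makes the "in theory" claim testable, because the gap between the two groups, or the lack of one, shows up directly in the numbers.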

In addition, a metric will be needed more than ever to determine and control the value of automation within the organization.

But we want to make clear that, in terms of automation, more doesn’t have to mean better.

Self-service portal use has an inverse relationship with incidents and service requests:

The more end users discover that the self-service portal meets their needs for datasets, analytics, access to services and resources, etc., the less frequent IT service requests will be.

This goes beyond simple MTTR reduction.

It also involves employing self-service portals to allow customers to complete the most common service requests without the need to create and close a service ticket.

If installed and maintained, this should lead to a wonderful world where fewer service tickets are issued, users are happier and more productive, and users may even suggest changes, additions and extensions to the self-service portals.

Let’s evaluate the history of metrics

There are too many management tools and utilities for complex, hybrid cloud-based environments, don’t you think?

Companies tend to get a better deal if they focus their search on a single comprehensive, unified management platform.

They often reduce the number of management tools they use, while streamlining the management of their IT operations by performing such a transformation.

The result?

A type of “virtuous cycle” that leverages improved automation and visibility to expand opportunities for continuous optimization and raise the level of indicators used to assess the overall health of IT infrastructure.

Conclusion

Useful cost metrics will reveal the areas that will profit most from automation and self-service access. Such metrics go beyond the cost of IT acquisitions, amortization and depreciation, as well as the cost of IT consumption, including software-as-a-service (SaaS) solutions, cloud resources, usage, and permanent virtual machine assignments.

As a result, you will identify objectives for continuous optimization and improvements that will keep costs moving in the right direction.

Dimas P.L., from the distant and exotic Vega Baja, CasiMurcia, journalist, editor, thaumaturgist of content and champion of scaring pigeons in parks. I currently live in Madrid where I work as a communication champion in Pandora FMS and as a freelance cultural journalist in any media offered. I also go crazy writing and reciting in the deepest and darkest poetic circles of the city.