How to monitor PostgreSQL

PostgreSQL is a very powerful and stable database management system (SGBD) that allows managing a very large amount of data. In its more than 16 years of active development by a selfless, altruistic and free community of developers, PostgreSQL has become a well-known SGBD to the level of other proprietary solutions.

In order to get the software working at peak performance and make a better use of your resources, it is essential to optimize the parameters of Progress. Optimizing is important, but optimizing without monitoring is completely useless. With a tool that monitors your systems, you will be notified on problems before they become a bigger issue. If an error occurs in your database, you will receive an email with all the information so you can solve it before it’s too late.

A monitoring system will let you know about when, where and why a problem occurred. With a good optimization and monitoring of ProgreSQL, this database will be suitable even for large companies.

All these things will provide you with a better user experience and reliability. It will even reduce the time spent on maintenance of PostgreSQL and, therefore, you will cut down on costs and time.

COLLECTION OF INTERESTING DATA

There are several tools from Linux / Unix that collect useful information about the impact of PostgreSQL in the system. We will talk about some of them below.

* ps

With command, which most of Linux / Unix systems includes, you can get information about the CPU usage, RAM PostgreSQL processes, the client connections to the server and its activity, among many other things.

For example, with a query as the one below:

ps aux | grep “postgres” | grep -v “grep”

We would get this information:

postgres 1289 0.0 0.3 51676 7900 ? S 11:31 0:01 /usr/lib/postgresql/9.1/bin/postgres -D /var/lib/postgresql/9.1/main -c config_file=/etc/postgresql/9.1/main/postgresql.conf

postgres 1303 0.0 0.0 21828 1148 ? Ss 11:31 0:00 postgres: logger process

postgres 1305 0.0 0.0 51676 1568 ? Ss 11:31 0:02 postgres: writer process

postgres 1306 0.0 0.0 51676 1324 ? Ss 11:31 0:02 postgres: wal writer process

postgres 1307 0.0 0.1 52228 2452 ? Ss 11:31 0:00 postgres: autovacuum launcher process

postgres 1308 0.0 0.0 22016 1432 ? Ss 11:31 0:00 postgres: stats collector process

The third and fourth columns show the percentage of CPU usage and RAM, respectively. Since a very high consumption of CPU and RAM can cause slowness and server crashes, it is important to pay attention to these two data.

Note that if data appear when performing this query, it means that the PostgreSQL server is active.

Do you want to know more about application monitoring?

Pandora FMS Enterprise is capable of monitoring the most popular applications and databases. Click here and find out…

* Vmstat:

With this command (included in most of the facilities of Linux / Unix systems) you can obtain data related to the common memory and SWAP, (at the entrance and exit), the system and the CPU. The first line always shows an average since the last reboot.

To get a view of the current data during an interval of time with a defined number of repetitions, you can perform a query like the one below, where the first variable shows the time in seconds that will measure each line, and the second variable shows the number of lines that will appear:

vmstat 1 5

It will return information like this:

procs ———–memory———- —swap– —–io—- -system– —-cpu—-

r b swpd free buff cache si so bi bo in cs us sy id wa

0 0 0 188972 179496 669392 0 0 24 27 525 461 1 1 96 1

0 0 0 188972 179496 669392 0 0 0 0 1449 1689 2 2 97 0

0 0 0 188972 179496 669392 0 0 0 0 2403 1605 2 2 97 0

2 0 0 188972 179500 669392 0 0 0 16 2041 2502 2 3 94 2

0 0 0 188972 179500 669392 0 0 0 0 1599 1868 1 1 98 0

The most useful information to the topic that concerns us is shown in the last column: “wa”. It shows the timeout for Input / Output operations. Since the system could “collapse ” due to an excessive disk access, it is very important to monitor it so it will not exceed a value of 15-20.

Check if the values of the columns “si” and “so” of the SWAP memory usage are 0. It would mean that the Ram memory of the server is saturated.

* netstat

This command displays information about the status of the network connections, so you can check the number of standby connections in the computer hosting the PostgreSQ, with a query like the one below.

netstat -ntu | grep “TIME_WAIT” | wc -l

A high number of “standby connections” may indicate problems with the server response as a result of other problems.

** Views and internal tables of PostgreSQL

PostgreSQL has a subsystem called Statistics Collector responsible for collecting and reporting information on the activity of the server. To deactivate Statistic Collectors and avoid performance losses, modify the parameters ‘ track_counts ‘, ‘ track_functions ‘ and ‘ track_activities ‘ on the file ‘ postgresql.conf ‘ . However, before deactivating it, you must assess whether it is worthwhile as this will remove the possibility of obtaining very useful data.

To access these data, you need SQL to perform several queries to some predefined views. Some of the most important ones are:

* pg_database

It saves information about the databases available. A database per row.

This table is very interesting because it will allow you to find out the size ( in bytes ) of all the databases. The ” pg_database_size ( oid ) ” returns the size of the database whose ID is passed as an argument . By performing a query on this table, it will show the size of each database, row by row, and you will only have to add the results to see if there is enough space left in the disk:

SELECT SUM(pg_database_size(oid)) FROM pg_database;

* pg_locks

* pg_locks displays information on the active locks in the server. It has one row for each lock, which display information about the type of lock and where it has occurred, etc.

As the SGBD allows multiple users to access at the same time, some of them may want to make changes on the same item that is being used by another user. To avoid this situation, the elements in use in a transaction must be locked. To find out the number of locked element, perform the following query:

SELECT COUNT(*) FROM pg_locks;

A high number of locks could decrease the server performance, because two or more processes could try to access the same resource and would have to wait for the resource to be unlocked.

* pg_stat_activity : it shows information about the server processes. It shows a row per process.

From this view you can get the number of users connected to the query:

SELECT COUNT(usename) FROM pg_stat_activity;

The number of concurrent users that can manage the system depend on the hardware and the connection of the machine where the server is located. It is convenient to perform stress tests to find out what that limit is and be alerted if the system is reaching it.

* pg_stat_database

It shows information about the use of databases. It has one row for each database, which shows the number of connected processes, commits and rollbacks. Furthermore, it shows information on the blocks read from the disk, the cache and the number and type of operations performed with rows of each one.

With this table you can find out, for example, if the cache memory is working properly. With the query below, you can get a usage rate of the cache with respect to the common:

SELECT SUM(blks_hit) / SUM(blks_read) FROM pg_stat_database;

The higher ratio, the greater speed when collecting data for the tables.

* pg_stat_bgwriter

It only has one row and shows data related to checkpoints and buffers from the background writer.

An advanced user able to interpret data can take advantage of this information by changing certain properties to improve the performance. For example, you can perform this query:

SELECT maxwritten_clean FROM pg_stat_bgwriter;

This query will return the number of times that the bgwriter has overwritten the maximum number of buffers allowed in that round, when a checkpoint has been performed. A low number is ok, but if the values are too high, you should increase the value ‘ bgwriter_lru_maxpages ‘ (default 100 ) in ‘ postgresql.conf ‘ or the command line to improve the performance .

You can also improve the performance by checking what external processes are forced to ask for more space many times in the buffers.

Use this query to check it:

SELECT buffers_backend FROM pg_stat_bgwriter;

To expand the space before a process needs to extend it, you can increase the values ‘ bgwriter_lru_maxpages ‘ and ‘ bgwriter_lru_multiplier ‘ , and reduce the value ‘ bgwriter_delay ‘.

Check out the rest of tables and functions here: http://www.postgresql.org/docs/9.1/static/monitoring-stats.html # MONITORING -STATS – VIEWS – TABLE (it will vary depending on the version of PostgreSQL ).

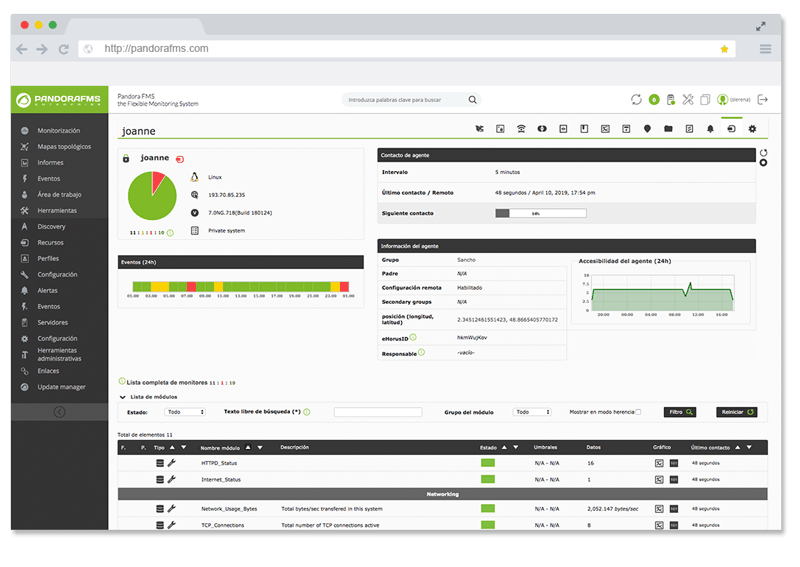

How to monitor PostgreSQL with Pandora FMS

Some users may prefer to perform checks manually to ensure the proper operation of your PostgreSQL server . However, for those who need to maintain a tight control of the operation and evolution of the system, a monitoring system is the perfect solution to increase the functionalities and possibilities of PostgreSQL.

The market offers a wide range of monitoring systems. As manufacturers and experts in Pandora FMS we strongly recommend Pandora FMS and its plugin for PostgreSQL to monitor PostgreSQL.

The first step to deploy the monitoring is to download and install Pandora FMS. Please, fin below the ISO image:

http://pandorafms.com/Community/download/

Once installed, install an agent on the machine where server cache of PostgreSQL is running. You can find many different Linux distributions agents at the following link:

http://sourceforge.net/projects/pandora/files/Pandora % 20FMS % 204.0.3 /

Since you can find all the information on how to install every process in the official documentation, we won’t explain it in this article. For further information on this process, click on this link:

http://wiki.pandorafms.com/index.php?title=Pandora:Documentation_en:Installing

Click on the link below to download the to monitor PostgreSQL.

http://pandorafms.com/index.php?sec=Library&sec2=repository&lng=es&action=view_PUI&id_PUI=553

To install the plugin , you just have to unzip the downloaded file and move the Pandora_PostgreSQL.conf Pandora_and PostgreSQL.pl files to the agent plugin folder installed on the PostgreSQL server to monitor. You can use the following commands:

# unzip Pandora_PostgreSQL.zip

# cp Pandora_PostgreSQL.* /etc/pandora/plugins

Once the plugin is in the right location, we have to configure it. Enter a user name and a password of a user with full permissions to log in from the terminal. You will also have to add the host address and specify a directory for temporary data. Once done, the plugin will be fully functional, as it includes a lot of checks by default.

You can add or delete checks from the configuration, or even customize the existing ones.

There are three types of checks: system checks, general queries about information of PostgreSQL and free queries. To remove one in particular, just delete or add a comment with a # at the beginning of each line of the check.

To add or customize a new check, firstly you need to understand how the data displayed here operates:

* System checks:

check_begin

check_pgsql_service

check_end

These checks are predefined and can not be added to new ones, but you can delete them or customize them as it will be explained later.

* General queries:

check_begin

check_name (Nombre del chequeo)

pgsql_column (Columna de la que se quiera extraer información)

pgsql_table (Tabla de la que se desea extraer información)

check_end

These checks will work just with numerical data. In that case, it will return the whole of all the data found in the specified table column.

* Free queries:

check_begin

check_name (Nombre del chequeo)

pgsql_sql (Consulta SQL a realizar)

check_end

These checks work like general queries when searching for numerical data. In case, the information you wish to gather is text-type, these checks will return the first text data found.

Each check should be between two lines: ” check_begin ” and “check_end “. It will have all the necessary data for execution indicated above and optional customization:

– post_condition :

It compares the result obtained when executing the module with the one indicated here. If it meets, ‘ post_status ‘, ‘ post_execution ‘ or both of them (if they are defined) will be executed.

Eg post_condition > 10

– post_status :

It switches the status of the check into the indicated one when the condition of ‘ post_condition ‘ is met. Ex: WARNING post_status

– post_execution :

Run the terminal’s order indicated before ‘ _data_ ‘ in the event that the condition of ‘ post_condition ‘ is fulfilled.

Eg post_execution snmptrap – v 1-c public 192.168.5.2 1.3.6.1.2.1.2 192.168.50.124 6 666 1233433 .1.3.6.1.2.1.2.2.1.1.6 i _data_

– data_delta : Specifying this property, the information obtained by the check will be treated as “delta ” , ie , reflects the increase to observe the rate of increase that was produced .

– module_type : it changes the data type that will return the check indicated.

Eg async_string module_type

Once setup is completed according to your needs, add the plugin configuration file of the Pandora FMS agent, which will be by default in the path “/ etc / pandora / pandora_agent.conf ” , so you can run it periodically. If the plugin files have been already added to the plugins folder , you just have to add this line :

module_plugin /etc/pandora/plugins/Pandora_PostgreSQL.pl /etc/pandora/plugins/Pandora_PostgreSQL.conf

After completing this process, Pandora FMS will have been successfully installed in your systems and you can be sure that you will be informed of any failures occurring in your systems before they become a bigger problem for your environment.

Pandora FMS’s editorial team is made up of a group of writers and IT professionals with one thing in common: their passion for computer system monitoring. Pandora FMS’s editorial team is made up of a group of writers and IT professionals with one thing in common: their passion for computer system monitoring.