Pandora FMS Engineering

Pandora FMS Database Design

Early versions of Pandora FMS, from 0.83 to 1.1, were based on a very simple idea: one piece of data, one database insertion. This allowed the software to perform searches, insertions and other operations quickly and easily.

Despite the advantages of this design, there was one drawback: scalability (the ability to grow quickly with little or no effect on operations and work routines). This system had a hard limit on the maximum number of modules it could support, and beyond a certain amount of data (more than 5 million elements), performance degraded.

MySQL cluster-based solutions, on the other hand, are difficult: although they can handle a larger load, they add extra problems and difficulties, and they do not offer a long-term solution to the performance problem with large amounts of data.

Currently, Pandora FMS implements real-time data compaction on each insertion, in addition to data compression based on interpolation. The maintenance task also automatically deletes data that exceeds a certain age.

The Pandora FMS processing system stores only “new” data: if a duplicate value enters the system, it will not be stored in the database. This is very useful for keeping the database small, and it works for all Pandora FMS module types (numeric, incremental, boolean and text string).

These modifications involve big changes when reading and interpreting data. In the latest Pandora FMS versions, the graphic engine was redesigned from scratch to be able to represent data quickly with the new data storage model.

Compaction mechanisms also have certain implications when reading and interpreting the data graphically. Currently, there is a graphical configuration menu that allows adding percentiles, real-time data, markers for when events and/or alerts took place, and other options.

Additionally, Pandora FMS allows the total disaggregation of components, so that the load of processing data files and executing network modules can be balanced across different servers.

Other technical aspects of the DB

Over successive software updates, improvements were made to the relational model of the Pandora FMS database. One of the changes introduced was indexing the information based on the different types of modules. That way, Pandora FMS can access the information much more quickly, because it is distributed across different tables.

It is possible to partition the tables (by timestamps) to further improve access to history data.

In addition, factors such as the numerical representation of timestamps (_timestamp_ UNIX format) speed up searches over date ranges, date comparisons, etc. This work has led to a considerable improvement in search and insertion times.

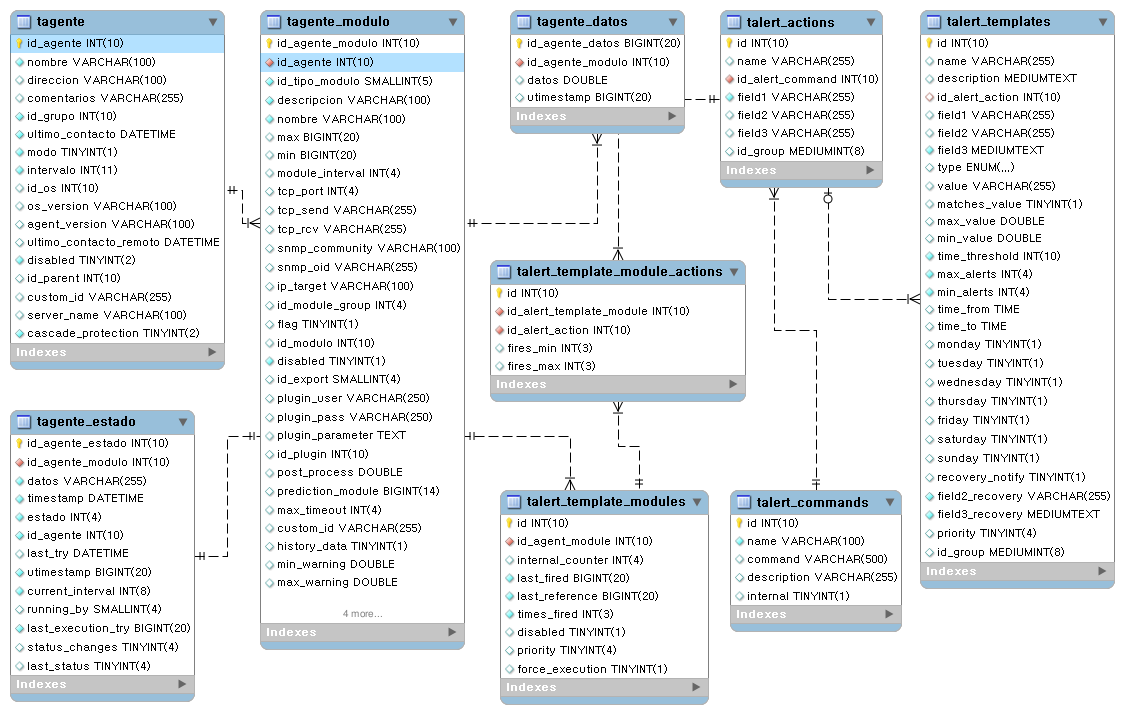

Main database tables

Real-time data compression

To avoid overloading the database, the server performs a simple insertion-time compression. A piece of data is not stored in the database unless it is different from the previous one or more than 24 hours have passed between the two.

For example, assuming an approximate 1 hour interval, the sequence 0,1,0,0,0,0,0,0,0,0,0,1,1,0,0,0 is stored in the database as 0,1,0,1,0. Another consecutive 0 will not be stored unless 24 hours go by.
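The insertion rule can be sketched in a few lines of Python. This is only an illustration of the rule as described here, not the server's actual code: the function name `compress`, the `(timestamp, value)` tuple layout and the sample values are assumptions made for the example.

```python
HOUR = 3600        # seconds
DAY = 24 * HOUR    # maximum gap between two stored samples

def compress(samples, max_gap=DAY):
    """Keep a sample only if its value differs from the last stored one
    or more than max_gap seconds have passed since the last stored one.

    samples: iterable of (unix_timestamp, value), sorted by timestamp.
    """
    stored = []
    for ts, value in samples:
        if not stored:
            stored.append((ts, value))   # the first sample is always kept
            continue
        last_ts, last_value = stored[-1]
        if value != last_value or ts - last_ts > max_gap:
            stored.append((ts, value))
    return stored

# Hypothetical hourly samples: repeated values collapse to one entry.
values = [5, 5, 5, 7, 7, 5]
hourly = [(i * HOUR, v) for i, v in enumerate(values)]
print([v for _, v in compress(hourly)])  # [5, 7, 5]
```

With a constant value, a new row only appears once more than 24 hours have passed since the last stored row.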

Compression greatly affects data processing algorithms, both metrics and graphs, and it is important to keep in mind that the “gaps” caused by compression need to be filled.

Taking into account all the above, to perform calculations with the data of a module given an interval and an initial date, the following steps must be followed:

- Search for the previous piece of data, outside the given interval and date. If it exists, bring it to the beginning of the interval. If it does not exist, there is no previous data.

- Search for the next piece of data, outside the given interval and date, up to a maximum equal to the module interval. If it exists, bring it to the end of the interval. If it does not exist, the last available value should be extended to the end of the interval.

- All data is traversed, taking into account that a value is valid until a different value is received.
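The three steps above can be sketched as follows. This is a minimal illustration under stated assumptions, not the code used by the console: `window_samples` and `time_weighted_average` are hypothetical names, rows are assumed to be `(unix_timestamp, value)` tuples sorted by time, and the time-weighted average is just one example of a calculation over the filled interval.

```python
from bisect import bisect_left, bisect_right

def window_samples(stored, start, end, module_interval):
    """Return the samples covering [start, end], with the gaps left by
    compression filled at both edges of the interval."""
    times = [t for t, _ in stored]
    lo = bisect_left(times, start)    # first row with t >= start
    hi = bisect_right(times, end)     # first row with t > end
    inside = list(stored[lo:hi])

    # Step 1: bring the previous value to the beginning of the interval.
    if lo > 0 and (not inside or inside[0][0] > start):
        inside.insert(0, (start, stored[lo - 1][1]))
    if not inside:
        return []                     # no data for this interval

    # Step 2: bring the next value (if close enough) to the end of the
    # interval; otherwise extend the last available value.
    if inside[-1][0] < end:
        if hi < len(stored) and stored[hi][0] <= end + module_interval:
            inside.append((end, stored[hi][1]))
        else:
            inside.append((end, inside[-1][1]))
    return inside

def time_weighted_average(samples):
    """Step 3: traverse the data; each value holds until the next one."""
    if not samples:
        return None
    span = samples[-1][0] - samples[0][0]
    if span == 0:
        return float(samples[0][1])
    total = sum(v0 * (t1 - t0)
                for (t0, v0), (t1, _) in zip(samples, samples[1:]))
    return total / span

rows = [(0, 10), (1000, 20)]
filled = window_samples(rows, start=500, end=1500, module_interval=300)
print(filled)                         # [(500, 10), (1000, 20), (1500, 20)]
print(time_weighted_average(filled))  # 15.0
```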

Data Compacting

Pandora FMS includes a system for compacting database information. This system is oriented to small and medium-size scenarios (from 250 to 500 agents and with less than 100,000 modules) that want to keep an extensive information history at the cost of losing some detail.

Pandora FMS database maintenance is executed every hour and, among other cleanup tasks, compacts old data. This compaction uses a simple linear interpolation. Because it is an interpolation, details are lost, but the data will still be informative enough for reports and monthly or yearly graphs, etc.

In large databases, this operation could be quite costly in terms of performance and would have to be disabled; instead, it is recommended to go for the history database model.
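To give an idea of what compaction by linear interpolation means, the sketch below resamples a series onto a coarser time grid. The text only states that a simple linear interpolation is used, so the grid-based approach, the function name `compact_linear` and the `step` parameter are assumptions for illustration and may differ from what the maintenance script actually does.

```python
def compact_linear(samples, step):
    """Resample (timestamp, value) pairs every `step` seconds using
    linear interpolation between the two neighbouring samples.

    samples must be sorted with strictly increasing timestamps.
    """
    if len(samples) < 2:
        return list(samples)
    out = []
    i = 0
    t = samples[0][0]
    end = samples[-1][0]
    while t <= end:
        # Advance to the segment [samples[i], samples[i + 1]] containing t.
        while samples[i + 1][0] < t:
            i += 1
        (t0, v0), (t1, v1) = samples[i], samples[i + 1]
        frac = (t - t0) / (t1 - t0)
        out.append((t, v0 + frac * (v1 - v0)))
        t += step
    return out

raw = [(0, 0.0), (10, 10.0), (20, 0.0), (30, 30.0)]
print(compact_linear(raw, step=15))  # [(0, 0.0), (15, 5.0), (30, 30.0)]
```

The peak at timestamp 10 and the dip at timestamp 20 disappear from the compacted series, which is the loss of detail mentioned above.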

History database

The history database is a feature used to store all the past information, which is not used in recent day views, such as data older than one month. This data is automatically migrated to a different database, which must be on a different server with a different storage (disk) than the main database.

When a graph or a report with old data is displayed, Pandora FMS will search the most recent days in the main database, and when it reaches the point at which data has been migrated to the history database, it will continue the search there. Thanks to this, performance is maximized even when a large amount of information accumulates in the system.
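The lookup described above amounts to splitting the requested time range at the migration point. The following sketch assumes a single cutoff timestamp before which data lives in the history database; `split_range` and `cutoff` are hypothetical names, not part of Pandora FMS.

```python
def split_range(start, end, cutoff):
    """Split [start, end] into the part to read from the history
    database (older than cutoff) and the part to read from the main
    database. Either part is None when it is not needed."""
    history = (start, min(end, cutoff)) if start < cutoff else None
    main = (max(start, cutoff), end) if end > cutoff else None
    return history, main

# A range that straddles the cutoff is served by both databases.
print(split_range(0, 100, cutoff=40))   # ((0, 40), (40, 100))
# A recent range only touches the main database.
print(split_range(50, 100, cutoff=40))  # (None, (50, 100))
```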

Advanced configuration

By default, Pandora FMS does not transfer text string data to the history database. However, if this configuration is modified and the history database receives this type of information, it is essential to configure its purging; otherwise it will end up taking up too much space, causing serious problems and a negative impact on performance.

To configure this parameter, a query must be executed directly in the database to set the number of days after which this information will be purged. The table is tconfig and the token is string_purge. To purge this type of information after 30 days, the following query would be executed directly on the history database:

UPDATE tconfig SET VALUE = 30 WHERE token = "string_purge";

The database is maintained by a script called pandora_db.pl. To check that database maintenance runs correctly, execute the maintenance script manually:

/usr/share/pandora_server/util/pandora_db.pl /etc/pandora/pandora_server.conf

It should not report any errors. If another instance is using the database, you may use the -f option to force execution; with the -p parameter, it does not compact data. This is particularly useful in High Availability (HA) environments with a history database, since the script makes sure to perform the necessary steps for those components properly and in order.

Pandora FMS module statuses

When is each status established?

- On the one hand, each module has WARNING and CRITICAL thresholds in its configuration.

  - These thresholds define the values of your data for which these statuses will be activated.

  - If the module provides data outside these thresholds, it is considered to be in NORMAL status.

- Each module has, in addition, a time interval that will set the frequency with which it will obtain data.

  - This interval will be taken into account by the console to collect the data.

  - If the module has not collected data for twice its interval, the module is considered to be in UNKNOWN status.

- Finally, if the module has alerts configured and any of them was triggered but not validated, the module will have the corresponding Alert triggered status.
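The rules above can be condensed into a small decision function. This is a sketch under several assumptions: thresholds are modelled as inclusive `(min, max)` ranges, the unknown check is evaluated before the thresholds, and the function and parameter names are hypothetical rather than taken from Pandora FMS.

```python
def in_range(value, bounds):
    """True if bounds is set and min <= value <= max."""
    if bounds is None:
        return False
    low, high = bounds
    return low <= value <= high

def module_status(value, warning, critical, last_contact, now, interval,
                  alert_fired=False):
    """Return the status of one module.

    warning / critical: (min, max) ranges or None (assumed representation).
    last_contact, now: UNIX timestamps; interval: seconds between samples.
    """
    if alert_fired:                          # triggered, not validated
        return "alert fired"
    if now - last_contact >= 2 * interval:   # no data for twice the interval
        return "unknown"
    if in_range(value, critical):
        return "critical"
    if in_range(value, warning):
        return "warning"
    return "normal"                          # outside both thresholds

print(module_status(95, (80, 90), (90, 100),
                    last_contact=1000, now=1100, interval=300))  # critical
```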

Propagation and priority

Within the Pandora FMS organization, certain elements depend on others, as is the case with the modules of an agent or the agents of a group. This also applies to Pandora FMS policies, which have certain agents associated with them, and certain modules that are considered to be associated with each agent.

Such a structure is particularly useful for evaluating module statuses at a glance. This is achieved by propagating statuses upwards through this organization, thus giving a status to agents, groups and policies.

Which status will an agent have?

An agent will be shown with the most critical status of the statuses of its modules. In turn, a group will have the most critical status of the statuses of the agents that belong to it, and the same for the policies, which will have the most critical status of their assigned agents.

Thus, when you see a group with a critical status, for example, you will know that at least one of its agents has the same status. To locate it, you will have to go down another level, to the agents' level, to narrow the circle and find the module or modules causing the propagation of this critical status.

What is the priority of the statuses?

As the most critical status of the statuses is propagated, it must be clear which statuses take priority over the others:

- Alerts triggered.

- Critical condition.

- Warning status.

- Status unknown.

- Normal condition.

When a module has triggered alerts, its status takes priority over all the others. The agent to which it belongs will have that status, and the group that agent belongs to will, in turn, also have that status.

On the other hand, for a group to have normal status, for example, all its agents must have that status, which means that all the modules of that group will have normal status.
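Propagation can be sketched as taking the highest-priority status at each level. The priority order comes from the list above; the status strings, function names and sample data are assumptions for the example, and every agent is assumed to have at least one module.

```python
# Lowest to highest priority, following the list above.
PRIORITY = ["normal", "unknown", "warning", "critical", "alert fired"]

def worst(statuses):
    """Return the status with the highest priority (non-empty input)."""
    return max(statuses, key=PRIORITY.index)

def agent_status(module_statuses):
    return worst(module_statuses)

def group_status(agents):
    """agents: dict mapping agent name -> list of module statuses."""
    return worst(agent_status(mods) for mods in agents.values())

group = {
    "web01": ["normal", "normal", "warning"],
    "db01": ["normal", "critical"],
    "mail01": ["unknown", "normal"],
}
print({name: agent_status(mods) for name, mods in group.items()})
# {'web01': 'warning', 'db01': 'critical', 'mail01': 'unknown'}
print(group_status(group))  # critical
```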

Color coding

Pandora FMS Charts

Graphs are one of the most complex implementations of Pandora FMS. They retrieve data in real time from the database, and no external system (RRDtool or similar) is used.

Graphs behave in several different ways depending on the type of source data:

- Asynchronous modules. It is assumed that there is no data compaction: the data in the database are all real samples. This produces much more “accurate” graphs with no possibility of misinterpretation.

- String type modules. They show graphs with the data rate over time.

- Numerical data modules. Most modules report this type of data.

- Boolean data modules. They correspond to numerical data on PROC monitors, e.g. ping checks, interface status, etc. Value 0 corresponds to “Critical” status, and value 1 to “Normal” status.
