High availability (HA)

Introduction

In critical and/or heavy-load environments, it may be necessary to distribute the load across multiple machines and to make sure that the system remains online if any Pandora FMS component fails.

Pandora FMS is designed to be modular, but its components are also designed to work together and to take over the load of components that have gone down.

Obviously, the agents are not redundant. The solution is to make critical systems redundant - regardless of whether they have Pandora FMS agents running on them or not - and thus redundantly monitor those systems.

High Availability (HA) can be applied in several scenarios:

- Data server.

- Network, WMI, Plugin, Web, Prediction, Recon, and similar servers.

- Database (DB).

- Pandora FMS console.

Data Server High Availability

- For the Pandora FMS data server, two machines need to be set up, each with a configured Pandora FMS data server and a different hostname and server name.

- A Tentacle server must be configured on each of them.

- Each machine will have a different IP address.

- If an external load balancer is to be used, it will provide a single IP address to which the agents will connect to send their data.

- If the agents' HA mechanism is used, there will be a slight delay in sending data. On each execution, the agent first tries to connect to the primary server and, if it does not answer, then tries the secondary server (if one has been configured).

If you want to use two data servers and have both handle policies, collections, and remote configurations, you will have to share the key directories so that all instances of the data server can read and write to those directories. The consoles must also have access to these shared directories.

/var/spool/pandora/data_in/conf
/var/spool/pandora/data_in/collections
/var/spool/pandora/data_in/md5
/var/spool/pandora/data_in/netflow
/var/www/html/pandora_console/attachment
/var/spool/pandora/data_in/discovery

It is important to only share the subdirectories within data_in and not data_in itself as it would negatively affect server performance.

High availability in EndPoints

EndPoints can distribute their data between data servers, because you can configure a master (primary) data server and a backup one. To do so, configure and uncomment the following parameters in the EndPoint configuration file pandora_agent.conf:

- secondary_server_ip: Secondary server IP address.

- secondary_server_path: Path where the XMLs are copied on the secondary server, usually /var/spool/pandora/data_in.

- secondary_server_port: Port used to copy the XMLs to the secondary server: 41121 for Tentacle, 22 for SSH and 21 for FTP.

- secondary_transfer_mode: Transfer mode used to copy the XMLs to the secondary server (Tentacle, SSH or FTP).

- secondary_server_pwd: Password option for FTP transfer.

- secondary_server_ssl: Set to yes or no depending on whether you want to use SSL to transfer the data via Tentacle.

- secondary_server_opts: Any other options needed for the transfer go in this field.

- secondary_mode: Mode the secondary server must be in. It can have two values:

- on_error: Sends data to the secondary server only if it cannot send it to the primary.

- always: Always sends data to the secondary server, regardless of whether it can contact the primary server or not.
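The difference between the two secondary_mode values is easiest to see as a small decision function. The following Python sketch is hypothetical: send_targets and the "primary"/"secondary" labels are illustrative names, not part of the agent's code. It only shows which servers end up with the XML on one agent run.

```python
# Hypothetical sketch: which servers receive an agent's XML on one run,
# depending on secondary_mode. Not the actual agent implementation.

VALID_MODES = ("on_error", "always")


def send_targets(secondary_mode, primary_ok):
    """Return the servers that receive the XML for this execution.

    secondary_mode -- value of secondary_mode in pandora_agent.conf
    primary_ok     -- whether the transfer to the primary server succeeded
    """
    if secondary_mode not in VALID_MODES:
        raise ValueError(f"unknown secondary_mode: {secondary_mode!r}")
    targets = ["primary"] if primary_ok else []
    # on_error: fall back to the secondary only when the primary failed.
    # always: copy to the secondary on every run.
    if secondary_mode == "always" or not primary_ok:
        targets.append("secondary")
    return targets
```

With on_error, the secondary only receives data when the primary is unreachable. With always, it receives a copy on every run.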

If remote configuration is enabled for the EndPoint, it only works with the primary server.

High availability of Network, WMI, plugin, web, prediction and similar servers

You need to install multiple servers (network server, WMI server, Plugin server, Web or prediction server) on several machines in the network. All of them must have the same visibility of the systems to be monitored and must be in the same network segment, so that the network latency data is consistent.

Servers can be marked as primary. Primary servers automatically pick up the data from all modules assigned to a server that is marked as “down”. The Pandora FMS servers detect that one of them has gone down by checking its last contact date against twice the server threshold (server threshold x 2). A single active Pandora FMS server is enough to detect that the others are down.

The obvious way to implement HA and load balancing in a two-node system is to assign 50% of the modules to each server and mark both servers as primary (master). If there are several primary servers and another server goes down with modules pending execution, the first primary server to execute one of those modules “self-assigns” it. When a down server recovers, the modules that had been assigned to the primary server are automatically reassigned back to the recovered server.
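The takeover rule can be modeled in a few lines. This Python sketch is hypothetical: the dict-based bookkeeping and the function names is_down, try_execute and restore are illustrations, not Pandora FMS's internal data model. It encodes the "server threshold x 2" check, the self-assignment by the first primary that reaches an orphaned module, and the return of modules on recovery.

```python
# Hypothetical model of module takeover between primary servers.
# Names and data structures are illustrative only.


def is_down(last_contact, now, server_threshold):
    """A server counts as down when its last contact is older than
    twice the server threshold ("server threshold x 2")."""
    return now - last_contact > 2 * server_threshold


def try_execute(module, executor, owner_of, down):
    """Called when primary server `executor` is about to run `module`.

    owner_of -- dict: module name -> server currently assigned
    down     -- set of server names currently considered down
    Returns True if `executor` runs the module.
    """
    owner = owner_of[module]
    if owner == executor:
        return True
    if owner in down:
        # The first primary to reach an orphaned module self-assigns it.
        owner_of[module] = executor
        return True
    return False


def restore(recovered, original_owner, owner_of):
    """Give a recovered server back the modules originally assigned to it."""
    for module, server in original_owner.items():
        if server == recovered:
            owner_of[module] = recovered
```

After srvC goes down, the first primary that calls try_execute("ping_c", ...) takes the module over. A later restore("srvC", ...) hands it back.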

Configuration in PFMS servers

A Pandora FMS server can be running in two different modes:

- Master mode (primary or MASTER mode).

- Non-master mode.

If a server “goes down” (is offline), its modules will be executed by the master server so that no data is lost.

At any given time there can only be one master server. It is chosen from the servers whose master option in /etc/pandora/pandora_server.conf has a value greater than 0:

master [1..7]

If the current master server goes down, a new master is chosen. If there is more than one candidate, the one with the highest master value is chosen.
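The election rule can be expressed as a short function. In this Python sketch, elect_master and the (name, master_value, alive) tuples are hypothetical. The documentation does not say how ties between equal master values are resolved, so list order is used here as an assumption.

```python
def elect_master(servers):
    """Pick the master among (name, master_value, alive) tuples.

    Only live servers with master > 0 are candidates, and the highest
    value wins. Ties are broken by list order here, which is an
    assumption, not documented Pandora FMS behavior.
    """
    candidates = [(value, name) for name, value, alive in servers
                  if alive and value > 0]
    if not candidates:
        return None
    # max() returns the first maximal element, so earlier entries win ties.
    return max(candidates, key=lambda c: c[0])[1]
```

A server with master 0 never becomes master, even if it is the only one alive.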

Care should be taken when disabling servers. If a server with network modules goes down and the Network Server of the master server is disabled, those modules will not be executed.

The following parameters have been introduced into pandora_server.conf:

- ha_file: Path of the temporary HA binary file.

- ha_pid_file: PID file of the current HA process.

- pandora_service_cmd: Pandora FMS service status control.

Add PFMS servers to an HA DB cluster

If you have Database High Availability, some extra configuration is needed to connect more Pandora FMS servers to the MySQL cluster. In the pandora_server.conf file (located by default in /etc/pandora) of each independent Pandora FMS server to be added, configure the following parameters:

dbuser: Must have the username for accessing the MySQL cluster. For example:

- /etc/pandora/pandora_server.conf

dbuser pandora

dbpass: Must contain the password of the user accessing the MySQL cluster. For example:

- /etc/pandora/pandora_server.conf

dbpass pandora

ha_hosts: Set this parameter to the IP addresses or FQDNs of the MySQL servers that make up the HA environment. Example:

ha_hosts 192.168.80.170,192.168.80.172

Pandora FMS console high availability

You just have to install another web console pointing to the database. Any of the consoles can be used simultaneously from different locations by different users. A web load balancer can be used in front of the consoles in case horizontal growth is needed for load balancing on the console. The session system is managed by cookies and these are stored in the browser.

In case of using remote configuration and to manage it from all consoles, both the data servers and the consoles must share the input data directory (by default: /var/spool/pandora/data_in) for the remote configuration of the agents, collections and other directories (see topic "Security architecture").

/var/spool/pandora/data_in/conf
/var/spool/pandora/data_in/collections
/var/spool/pandora/data_in/md5
/var/spool/pandora/data_in/netflow
/var/www/html/pandora_console/attachment
/var/spool/pandora/data_in/discovery

It is important to only share the subdirectories within data_in and not data_in itself as it would negatively affect server performance.

Update

When updating the Pandora FMS console in a High Availability environment, it is important to take into account the following considerations when updating via OUM through Management → Warp update → Update offline. The OUM package can be downloaded from the Pandora FMS support website.

In a load-balanced environment with a shared database, updating the first console applies the corresponding changes to the database. As a result, when the second console is updated, Pandora FMS displays an error because the information has already been inserted into the database. However, the console update still completes.

Database High Availability

The objective of this section is to offer a complete HA solution for Pandora FMS environments. This is the only officially supported HA model for Pandora FMS, and is provided as of version 770. This system replaces the cluster configuration with Corosync and Pacemaker from previous versions.

The new Pandora FMS HA solution is integrated into the product (within the pandora_ha binary). It implements an HA that supports geographically isolated sites, with different IP address ranges, which cannot be done with Corosync/Pacemaker.

In the new HA model, the usual configuration is in pairs, so the design does not implement a quorum system, which simplifies the configuration and the resources needed. The monitoring system keeps working as long as a DB node is available. In the event of a DB Split-Brain, the system runs in parallel until both nodes are merged again.

As of version 772, the HA was changed to be simpler and to have fewer failures. For this new HA, SSD disks are advisable for higher read/write speed (IOPS), with a minimum of 500 Mb/s (or more, depending on the environment). The latency between servers must also be taken into account, since very high latencies make it difficult to keep both DBs synchronized in time.

With the new proposal, the aim is to solve the three current problems:

- Complexity and maintenance of the current system (up to NG version 770).

- Possibility of having an HA environment distributed in different geographical locations with a non-local network segmentation.

- Data recovery procedure in case of Split-Brain and guaranteed functioning of the system in case of communication breakdown between both geographically separated sites.

The new HA system for DB is implemented on Percona 8. How to implement it on MySQL/MariaDB 8 will be detailed in later versions.

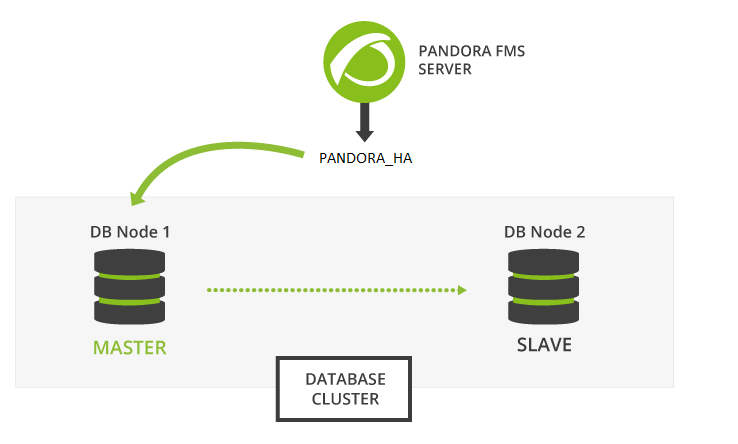

Pandora FMS is based on a MySQL database to configure and store data, so a database failure can temporarily paralyze the monitoring tool. The Pandora FMS high availability database cluster allows you to easily deploy a robust and fault-tolerant architecture.

This is an advanced feature that requires knowledge of GNU/Linux systems. It is important that all servers have their time synchronized with an NTP server (chronyd service in Rocky Linux 8).

The binary replication MySQL cluster nodes are managed with the pandora_ha binary, starting with version 770. Percona was chosen as the default RDBMS for its scalability, availability, security and backup features.

Active/passive replication goes from a single master node (with write permission) to any number of secondaries (read-only). If the master node fails, one of the secondaries is promoted to master and pandora_ha takes care of updating the IP address of the master node.
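The promotion step can be pictured as choosing which node should hold the MASTER role after each health check. This Python sketch is hypothetical and is not pandora_ha's code. It follows the ha_hosts ordering described in the Pandora FMS configuration section, where the first address listed is preferred. Two further behaviors are assumptions: a reachable master keeps its role (no automatic failback), and the first reachable node in ha_hosts order is promoted.

```python
def choose_master(ha_hosts, reachable, current_master=None):
    """Return the node that should hold the MASTER role.

    ha_hosts       -- ordered list from the ha_hosts token
    reachable      -- set of nodes whose MySQL answered the last check
    current_master -- node holding the role now, if any
    """
    # Assumption: keep the current master while it answers (no failback).
    if current_master in reachable:
        return current_master
    # Otherwise promote the first reachable node in ha_hosts order.
    for host in ha_hosts:
        if host in reachable:
            return host
    return None
```

In a real cluster, promotion also involves making the new master writable and repointing the replica, which pandora_ha handles. This sketch only covers the choice of node.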

The environment will consist of the following elements:

- MySQL8 servers with binary replication enabled (Active - Passive).

- Server with pandora_ha, configured with all MySQL servers, to carry out continuous monitoring and perform the necessary slave-master and master-slave promotions for the correct functioning of the cluster.

Percona 8 Installation

Version 770 or later.

Percona 8 Installation for RHEL 8 and Rocky Linux 8

First of all, it is necessary to have the Percona repository installed on all nodes in the environment to be able to later install the Percona server packages.

Open a terminal window with root privileges or as the root user. You are solely responsible for that password. The following instructions indicate whether each command must be executed on all devices, on some, or on one in particular, so pay attention to each statement.

Execute on all involved devices:

yum install -y https://repo.percona.com/yum/percona-release-latest.noarch.rpm

Enable version 8 of the Percona repository on all devices:

percona-release setup ps80

Install the Percona server along with the backup tool with which backups will be made for the manual synchronization of both environments. Execute on all involved devices:

yum install percona-server-server percona-xtrabackup-80

If the Percona server is installed together with the web Console and the PFMS server, you can use the deployment script, specifying the MySQL 8 version with the MYVER=80 parameter:

curl -Ls https://pfms.me/deploy-pandora-el8 | env MYVER=80 bash

Percona 8 Installation on Ubuntu Server

Install the Percona repository on all devices:

curl -O https://repo.percona.com/apt/percona-release_latest.generic_all.deb
apt install -y gnupg2 lsb-release ./percona-release_latest.generic_all.deb

Enable version 8 of the Percona repository on all devices:

percona-release setup ps80

Install the Percona server along with the backup tool with which the backups will be made for the manual synchronization of both environments. On all devices execute:

apt install -y percona-server-server percona-xtrabackup-80

Binary replication configuration

Requirements

User permissions

The user that must perform the steps in this guide on both HA nodes is the system root user, so always check that the corresponding privilege escalation has been performed.

Connectivity

Between the nodes that will form the HA, there must be connectivity via SSH (port number 22) and also between their MySQL servers (3306).

Likewise, it is recommended to use descriptive hostnames and DNS resolution between the machines.
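Before continuing, you can confirm from each node that its peer accepts connections on both required ports. This Python sketch uses only the standard socket module. The helper names port_open and check_peer are hypothetical. A successful TCP connection only proves the port is open, not that SSH keys or MySQL credentials work.

```python
import socket

HA_PORTS = (22, 3306)  # SSH and MySQL, as listed above


def port_open(host, port, timeout=3.0):
    """Return True if a TCP connection to host:port succeeds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:  # refused, unreachable, DNS failure or timeout
        return False


def check_peer(host):
    """Map each required port to its reachability from this node."""
    return {port: port_open(host, port) for port in HA_PORTS}
```

For example, running check_peer("pandoraha2") on pandoraha1 should return {22: True, 3306: True} when connectivity is correct.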

Shared directories

If you want to use two data servers and have both handle policies, collections, and remote configurations, you will have to share the key directories so that all instances of the Data Server can read and write to those directories. Consoles must also have access to these shared directories. For example, sharing them via NFS:

/var/spool/pandora/data_in/conf
/var/spool/pandora/data_in/collections
/var/spool/pandora/data_in/md5
/var/spool/pandora/data_in/netflow
/var/www/html/pandora_console/attachment
/var/spool/pandora/data_in/discovery
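If NFS is used, each shared directory needs its own line in /etc/exports on the NFS server. The following Python sketch generates those lines and refuses to export data_in itself, following the warning about server performance. The client network 192.168.80.0/24 used below and the export options rw,sync,no_root_squash are assumptions. Review them against your own network and security policy.

```python
import posixpath

DATA_IN = "/var/spool/pandora/data_in"
SHARED_DIRS = [
    "/var/spool/pandora/data_in/conf",
    "/var/spool/pandora/data_in/collections",
    "/var/spool/pandora/data_in/md5",
    "/var/spool/pandora/data_in/netflow",
    "/var/www/html/pandora_console/attachment",
    "/var/spool/pandora/data_in/discovery",
]


def exports_lines(dirs, clients, options="rw,sync,no_root_squash"):
    """Build /etc/exports lines; the default options are an assumption."""
    lines = []
    for d in dirs:
        # Share the subdirectories only, never data_in itself.
        if posixpath.normpath(d) == DATA_IN:
            raise ValueError("share the subdirectories of data_in, not data_in itself")
        lines.append(f"{d} {clients}({options})")
    return lines
```

The resulting lines can be appended to /etc/exports on the NFS server and activated with exportfs -ra.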

SSH Trust Configuration

On both nodes

MOTD and Banners cleanup.

On Rocky Linux 9:

[ -f /etc/cron.hourly/motd_rebuild ] && rm -f /etc/cron.hourly/motd_rebuild
cp -a /etc/ssh/sshd_config{,.bak-$(date +%F)}
sed -i -e 's/^Banner.*/#Banner none/g' /etc/ssh/sshd_config
sed -i 's/^\s*session\s\+optional\s\+pam_motd\.so/#&/' /etc/pam.d/sshd
systemctl restart sshd

On Ubuntu 22.04:

cp -a /etc/pam.d/sshd{,.bak-$(date +%F)}
sed -i 's/^session\s\+optional\s\+pam_motd.so/# &/' /etc/pam.d/sshd
chmod -x /etc/update-motd.d/*
systemctl restart sshd

a) RSA key generation without passphrase:

printf "\n\n\n" | ssh-keygen -t rsa -P ''

b) Identity copy (Bidirectional and Reflexive):

ssh-copy-id -p22 root@pandoraha1
ssh-copy-id -p22 root@pandoraha2

c) Check that it does not ask for a pass on the SSH connection (Bidirectional and Reflexive):

ssh pandoraha1
ssh pandoraha2
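The interactive check above can also be automated. The OpenSSH option BatchMode=yes disables password and host-key prompts, so ssh exits with an error instead of waiting for input. This Python sketch wraps that idea. The helpers ssh_check_cmd and passwordless_ok are hypothetical. Run the interactive ssh once first so that each host key is already accepted.

```python
import subprocess


def ssh_check_cmd(host, user="root", port=22):
    """Build an ssh command that fails instead of prompting.

    BatchMode=yes disables password (and host-key) prompts, so a zero
    exit status means key-based login works without interaction.
    """
    return ["ssh", "-p", str(port),
            "-o", "BatchMode=yes", "-o", "ConnectTimeout=5",
            f"{user}@{host}", "true"]


def passwordless_ok(host, **kwargs):
    """Run the check; False on any failure, including a missing ssh binary."""
    try:
        result = subprocess.run(ssh_check_cmd(host, **kwargs),
                                capture_output=True, timeout=15)
    except (OSError, subprocess.TimeoutExpired):
        return False
    return result.returncode == 0
```

Calling passwordless_ok("pandoraha1") and passwordless_ok("pandoraha2") on both nodes covers the bidirectional and reflexive cases.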

DB Replication

User creation

IMPORTANT: This step is performed only on the MASTER node and before modifying the my.cnf file.

Access the MySQL console:

mysql -u root -p pandora # Password by default: Pandor4!

Run the following SQL queries:

UNINSTALL COMPONENT 'file://component_validate_password';
CREATE USER replicationuser@'%' IDENTIFIED WITH mysql_native_password BY 'pandora';
GRANT REPLICATION CLIENT, REPLICATION SLAVE ON *.* TO replicationuser@'%';
CREATE USER root@'%' IDENTIFIED WITH mysql_native_password BY 'Pandor4!';
GRANT ALL PRIVILEGES ON *.* TO root@'%';
CREATE USER pandora@'%' IDENTIFIED WITH mysql_native_password BY 'pandora';
GRANT ALL PRIVILEGES ON pandora.* TO pandora@'%';
FLUSH PRIVILEGES;
EXIT;

Configuration of /etc/my.cnf

Binary replication configuration

It is recommended to store the binlogs generated by the replication in an additional partition or external disk; its size should be the same as the one reserved for the database. See also the log-bin and log-bin-index tokens.

Once the MySQL server is installed on all nodes in the cluster, proceed to perform the configuration in both environments to have them replicated.

First of all, you must configure the my.cnf configuration file, preparing it for binary replication to work properly.

Node 1

Node 1 /etc/my.cnf (/etc/mysql/my.cnf on Ubuntu server):

[mysqld]
server_id=1 # It is important that it is different in all nodes.
datadir=/var/lib/mysql
socket=/var/lib/mysql/mysql.sock
log-error=/var/log/mysqld.log
pid-file=/var/run/mysqld/mysqld.pid
# OPTIMIZATION FOR PANDORA FMS
innodb_buffer_pool_size = 4096M
innodb_lock_wait_timeout = 90
innodb_file_per_table
innodb_flush_method = O_DIRECT
innodb_log_file_size = 64M
innodb_log_buffer_size = 16M
thread_cache_size = 8
max_connections = 200
key_buffer_size=4M
read_buffer_size=128K
read_rnd_buffer_size=128K
sort_buffer_size=128K
join_buffer_size=4M
sql_mode=""
# SPECIFIC PARAMETERS FOR BINARY REPLICATION
binlog-do-db=pandora
replicate-do-db=pandora
max_binlog_size = 100M
binlog-format=ROW
binlog_expire_logs_seconds=172800 # 2 DAYS
#log-bin=/path # Uncomment for adding an additional storage path for binlogs and setting a new path
#log-bin-index=/path/archive.index # Uncomment for adding an additional storage path for binlogs and setting a new path
sync_source_info=1
sync_binlog=1
port=3306
report-port=3306
report-host=master
gtid-mode=off
enforce-gtid-consistency=off
master-info-repository=TABLE
relay-log-info-repository=TABLE
sync_relay_log = 0
replica_compressed_protocol = 1
replica_parallel_workers = 1
innodb_flush_log_at_trx_commit = 2
innodb_flush_log_at_timeout = 1800
[client]
user=root
password=pandora

- The tokens following the OPTIMIZATION FOR PANDORA FMS comment perform the optimized configuration for Pandora FMS.

- After the SPECIFIC PARAMETERS FOR BINARY REPLICATION comment, the specific parameters for binary replication are configured.

- The binlog_expire_logs_seconds token is set to a period of two days.

- In the [client] subsection, enter the user and password used for the database; by default, when installing PFMS they are root and pandora respectively. These values are necessary for making backups without specifying a user (automated).

It is important that the server_id token is different on all nodes; in this example, that number is used for node 1.

Node 2

Node 2 /etc/my.cnf (/etc/mysql/my.cnf on Ubuntu server):

[mysqld]
server_id=2 # It is important that it is different in all nodes.
datadir=/var/lib/mysql
socket=/var/lib/mysql/mysql.sock
log-error=/var/log/mysqld.log
pid-file=/var/run/mysqld/mysqld.pid
# OPTIMIZATION FOR PANDORA FMS
innodb_buffer_pool_size = 4096M
innodb_lock_wait_timeout = 90
innodb_file_per_table
innodb_flush_method = O_DIRECT
innodb_log_file_size = 64M
innodb_log_buffer_size = 16M
thread_cache_size = 8
max_connections = 200
key_buffer_size=4M
read_buffer_size=128K
read_rnd_buffer_size=128K
sort_buffer_size=128K
join_buffer_size=4M
sql_mode=""
# SPECIFIC PARAMETERS FOR BINARY REPLICATION
binlog-do-db=pandora
replicate-do-db=pandora
max_binlog_size = 100M
binlog-format=ROW
binlog_expire_logs_seconds=172800 # 2 DAYS
#log-bin=/path # Uncomment for adding an additional storage path for binlogs and setting a new path
#log-bin-index=/path/archive.index # Uncomment for adding an additional storage path for binlogs and setting a new path
sync_source_info=1
sync_binlog=1
port=3306
report-port=3306
report-host=master
gtid-mode=off
enforce-gtid-consistency=off
master-info-repository=TABLE
relay-log-info-repository=TABLE
sync_relay_log = 0
replica_compressed_protocol = 1
replica_parallel_workers = 1
innodb_flush_log_at_trx_commit = 2
innodb_flush_log_at_timeout = 1800
[client]
user=root
password=pandora

- The tokens following the OPTIMIZATION FOR PANDORA FMS comment perform the optimized configuration for Pandora FMS.

- After the SPECIFIC PARAMETERS FOR BINARY REPLICATION comment, the specific parameters for binary replication are configured.

- The binlog_expire_logs_seconds token is set to a period of two days.

- In the [client] subsection, enter the user and password used for the database; by default, when installing PFMS they are root and pandora respectively. These values are necessary for making backups without specifying a user (automated).

It is important that the server_id token is different on all nodes; in this example, that corresponding number is used for node 2.
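Because a repeated server_id silently breaks replication, it is worth checking the two files against each other. This Python sketch reads the [mysqld] section with the standard configparser module. The helper names are hypothetical. The sketch only recognizes the server_id spelling used in this guide and does not follow !include or !includedir directives, which Ubuntu's /etc/mysql/my.cnf may contain.

```python
import configparser


def read_server_id(my_cnf_text):
    """Return the server_id from the [mysqld] section of a my.cnf text."""
    parser = configparser.ConfigParser(
        allow_no_value=True,             # e.g. innodb_file_per_table
        inline_comment_prefixes=("#",),  # "server_id=1 # comment"
        strict=False,                    # tolerate repeated keys
        interpolation=None,              # keep '%' and quotes literal
    )
    parser.read_string(my_cnf_text)
    return parser.getint("mysqld", "server_id")


def server_ids_unique(configs):
    """configs: dict node name -> my.cnf text. True if no server_id repeats."""
    ids = [read_server_id(text) for text in configs.values()]
    return len(ids) == len(set(ids))
```

After copying each node's my.cnf to one machine, you can compare both files by passing their contents to server_ids_unique.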

It is necessary to restart MySQL for the changes to take effect:

Run on both nodes

On Rocky Linux 9:

systemctl restart mysqld

On Ubuntu Server 22.04:

systemctl restart mysql

DB Cloning

Database cloning: The next step consists of cloning the master database (MASTER) onto the slave node (SLAVE). To do this, follow these steps:

1.- Perform a full backup of the MASTER database with xtrabackup and prepare it:

MASTER # xtrabackup --backup --target-dir=/root/pandoradb.bak/

MASTER # xtrabackup --prepare --target-dir=/root/pandoradb.bak/

2.- Get the position of the binary log from the backup:

MASTER # cat /root/pandoradb.bak/xtrabackup_binlog_info

It will return something like the following:

binlog.000003 157

Take note of these two values as they are required for step number 6.
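Copying the two values by hand is error-prone, so they can be read from the file and turned into the statement used in step 6. This Python sketch is hypothetical (read_binlog_info and change_source_sql are not Pandora FMS tools). It assumes the file starts with the binlog file name and position separated by whitespace, as in the example above, and ignores any extra GTID field. The mysql84 flag produces the CHANGE REPLICATION SOURCE TO form shown later for MySQL 8.4.

```python
def read_binlog_info(text):
    """Return (log_file, log_pos) from xtrabackup_binlog_info content."""
    fields = text.split()
    if len(fields) < 2:
        raise ValueError("unexpected xtrabackup_binlog_info content")
    return fields[0], int(fields[1])


def change_source_sql(host, user, password, log_file, log_pos, mysql84=False):
    """Build the statement run on the SLAVE in step 6."""
    for value in (host, user, password, log_file):
        if "'" in value:
            raise ValueError("quotes are not supported in this sketch")
    kw = "SOURCE" if mysql84 else "MASTER"
    head = "CHANGE REPLICATION SOURCE TO" if mysql84 else "CHANGE MASTER TO"
    return (f"{head} {kw}_HOST='{host}', {kw}_USER='{user}', "
            f"{kw}_PASSWORD='{password}', {kw}_LOG_FILE='{log_file}', "
            f"{kw}_LOG_POS={log_pos};")
```

With the example values binlog.000003 and 157, the result matches the CHANGE MASTER TO statement in step 6.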

Stop MySQL service on the Slave Server:

On Rocky Linux 9:

systemctl stop mysqld

On Ubuntu Server 22.04:

systemctl stop mysql

Delete ALL content (Delete default DB):

rm -rf /var/lib/mysql/*

To avoid problems, we will also stop the pandora_ha service on both nodes.

systemctl stop pandora_ha

3.- Use rsync to send the backup to the SLAVE server:

MASTER # rsync -avpP -e ssh /root/pandoradb.bak/ pandoraha2:/var/lib/mysql/

4.- On the SLAVE server, set the ownership so that the MySQL server can access the transferred files:

SLAVE # chown -R mysql:mysql /var/lib/mysql

On systems with SELinux enabled (such as Rocky Linux), also set the SELinux context:

SLAVE # chcon -R system_u:object_r:mysqld_db_t:s0 /var/lib/mysql

5.- Start the MySQL service on the SLAVE server (the service is called mysqld on Rocky Linux and mysql on Ubuntu Server):

systemctl start mysqld

6.- Start the SLAVE mode on this server (use the data noted down in step 2):

SLAVE # mysql -u root -ppandora

SLAVE # mysql > reset slave all;

SLAVE # mysql > CHANGE MASTER TO MASTER_HOST='pandoraha1', MASTER_USER='replicationuser', MASTER_PASSWORD='pandora', MASTER_LOG_FILE='binlog.000003', MASTER_LOG_POS=157;

SLAVE # mysql > start slave;

SLAVE # mysql > SET GLOBAL read_only=1;

Once you have finished with all these steps, if you run the show slave status command inside the MySQL shell you will notice that the node is in slave mode. If set up correctly, an output similar to the following should be seen:

*************************** 1. row ***************************
Slave_IO_State: Waiting for source to send event
Master_Host: pandoraha1
Master_User: root
Master_Port: 3306
Connect_Retry: 60
Master_Log_File: binlog.000018
Read_Master_Log_Pos: 1135140
Relay_Log_File: relay-bin.000002
Relay_Log_Pos: 1135306
Relay_Master_Log_File: binlog.000018
Slave_IO_Running: Yes
Slave_SQL_Running: Yes
Replicate_Do_DB: pandora
Replicate_Ignore_DB:
Replicate_Do_Table:
Replicate_Ignore_Table:
Replicate_Wild_Do_Table:
Replicate_Wild_Ignore_Table:
Last_Errno: 0
Last_Error:
Skip_Counter: 0
Exec_Master_Log_Pos: 1135140
Relay_Log_Space: 1135519
Until_Condition: None
Until_Log_File:
Until_Log_Pos: 0
Master_SSL_Allowed: No
Master_SSL_CA_File:
Master_SSL_CA_Path:
Master_SSL_Cert:
Master_SSL_Cipher:
Master_SSL_Key:
Seconds_Behind_Master: 0
Master_SSL_Verify_Server_Cert: No
Last_IO_Errno: 0
Last_IO_Error:
Last_SQL_Errno: 0
Last_SQL_Error:
Replicate_Ignore_Server_Ids:
Master_Server_Id: 1
Master_UUID: fa99f1d6-b76a-11ed-9bc1-000c29cbc108
Master_Info_File: mysql.slave_master_info
SQL_Delay: 0
SQL_Remaining_Delay: NULL
Slave_SQL_Running_State: Replica has read all relay log; waiting for more updates
Master_Retry_Count: 86400
Master_Bind:
Last_IO_Error_Timestamp:
Last_SQL_Error_Timestamp:
Master_SSL_Crl:
Master_SSL_Crlpath:
Retrieved_Gtid_Set:
Executed_Gtid_Set:
Auto_Position: 0
Replicate_Rewrite_DB:
Channel_Name:
Master_TLS_Version:
Master_public_key_path:
Get_master_public_key: 0
Network_Namespace:
1 row in set, 1 warning (0,00 sec)

If MySQL 8.4 is used, the commands change slightly:

sudo mysql -u root

CHANGE REPLICATION SOURCE TO
  SOURCE_HOST='pandoraha1',         -- Enter the IP or DNS address of your MASTER MySQL server
  SOURCE_USER='replicationuser',    -- Enter the replication username
  SOURCE_PASSWORD='pandora',        -- Enter the replication password
  SOURCE_LOG_FILE='binlog.000003',  -- Update to the values retrieved in the previous step
  SOURCE_LOG_POS=157                -- Update to the values retrieved in the previous step
;

We start the replica and verify that everything is correct:

STOP REPLICA;
START REPLICA;
SHOW REPLICA STATUS\G

We verify that the replica is correct by checking these two fields in the SHOW REPLICA STATUS output:

Replica_IO_Running: Yes

Replica_SQL_Running: Yes

Both must be set to Yes.
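The same check can be scripted, for example from a monitoring job. This Python sketch parses the vertical (\G) output of SHOW SLAVE STATUS or SHOW REPLICA STATUS into a dictionary. It accepts both the older Slave_* field names and the newer Replica_* names. The function names are hypothetical. The input would typically come from running mysql -e "SHOW REPLICA STATUS\G" on the SLAVE.

```python
def parse_status(text):
    """Turn 'Field: value' lines from \\G output into a dict."""
    status = {}
    for line in text.splitlines():
        key, sep, value = line.strip().partition(":")
        # Skip the '*** 1. row ***' banner and the 'rows in set' footer.
        if sep and key and not key.startswith("*"):
            status[key] = value.strip()
    return status


def replication_ok(text):
    """True if both the IO and SQL replication threads report Yes."""
    s = parse_status(text)
    io = s.get("Replica_IO_Running", s.get("Slave_IO_Running"))
    sql = s.get("Replica_SQL_Running", s.get("Slave_SQL_Running"))
    return io == "Yes" and sql == "Yes"
```

Seconds_Behind_Master (or Seconds_Behind_Source) in the same dictionary gives a rough idea of the replication delay.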

From this moment on, you can assume that binary replication is enabled and running correctly.

Pandora FMS Configuration

Adjustments on Pandora FMS Server

Version 770 or later.

Several parameters must be configured in the pandora_server.conf file for pandora_ha to work properly.

The parameters to be added are the following:

- ha_hosts <IP_ADDRESS1>,<IP_ADDRESS2>:

The ha_hosts parameter must be followed by the IP addresses or FQDNs of the MySQL servers that make up the HA environment. The IP address placed first is preferred as the MASTER server; at least, it takes the master role when the HA environment is started for the first time. Example:

ha_hosts 192.168.80.170,192.168.80.172

- ha_dbuser and ha_dbpass:

These parameters hold the root user and password or, failing that, a MySQL user with full privileges. This user performs all master-slave promotion operations on the nodes. Example:

ha_dbuser root
ha_dbpass pandora

- repl_dbuser and repl_dbpass:

Parameters to define the replication user that the SLAVE will use to connect to the MASTER. Example:

repl_dbuser replicationuser
repl_dbpass pandora

- ha_sshuser and ha_sshport:

Parameters to define the user/port with which to connect via ssh to the Percona/MySQL servers to perform recovery operations. For this option to work properly, it is necessary to share the ssh keys between the user running the pandora_ha service and the user specified in the ha_sshuser parameter. Example:

ha_sshuser root
ha_sshport 22

- ha_resync PATH_SCRIPT_RESYNC:

By default, the script to resynchronize the nodes is located at:

/usr/share/pandora_server/util/pandora_ha_resync_slave.sh

If the script is installed in a custom location, indicate its path in this parameter so that the automatic or manual synchronization of the SLAVE node can be carried out when needed.

ha_resync /usr/share/pandora_server/util/pandora_ha_resync_slave.sh

- ha_resync_log:

Path of the log where all the information related to the executions performed by the script configured in the previous token will be saved. Example:

ha_resync_log /var/log/pandoraha_resync.log

- ha_connect_retries:

Number of retries performed against each HA environment server on every check before any change is made to the environment. Example:

ha_connect_retries 2

Once all these parameters are configured, you can start the Pandora FMS server with the pandora_ha service. The server will obtain an image of the environment and determine which server is the MASTER at that moment.

It will then create the pandora_ha_hosts.conf file in the /var/spool/pandora/data_in/conf/ folder, where it will record at all times which Percona/MySQL server has the MASTER role.

In the event that the incomingdir parameter of the pandora_server.conf file contains a different path (PATH), this file will be located in that PATH.

This file will be used as an exchange with the Pandora FMS Console so that it knows at all times the IP address of the Percona/MySQL server with the MASTER role.

- restart:

It must be set to 0, since the pandora_ha daemon is in charge of restarting the service in case of failure, which avoids possible conflicts. Example:

# Pandora FMS will restart after restart_delay seconds on critical errors.
restart 0

- ha_backup_source_dir:

Directory that will contain the HA backup data. Default value: /var/tmp.

- ha_mysql_source_dir:

Directory that contains the MySQL data. Default value: /var/lib.
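Before starting pandora_ha, you can check the edited file programmatically. This Python sketch parses the "token value" lines of pandora_server.conf and reports a few of the problems described above. The helper names are hypothetical. Treating ha_hosts, ha_dbuser, ha_dbpass, repl_dbuser and repl_dbpass as mandatory is an assumption of this sketch. pandora_ha itself may apply different defaults.

```python
REQUIRED_TOKENS = ("ha_hosts", "ha_dbuser", "ha_dbpass",
                   "repl_dbuser", "repl_dbpass")  # assumption


def parse_server_conf(text):
    """Parse 'token value' lines, skipping blanks and # comments."""
    conf = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue
        parts = line.split(None, 1)
        conf[parts[0]] = parts[1].strip() if len(parts) > 1 else ""
    return conf


def ha_problems(conf):
    """Return a list of human-readable problems (empty if none found)."""
    problems = [f"missing {token}" for token in REQUIRED_TOKENS
                if not conf.get(token)]
    hosts = [h.strip() for h in conf.get("ha_hosts", "").split(",")
             if h.strip()]
    if len(hosts) < 2:
        problems.append("ha_hosts should list at least two MySQL servers")
    if conf.get("restart") != "0":
        problems.append("restart should be 0 so that pandora_ha restarts the service")
    return problems
```

To use it, pass the contents of /etc/pandora/pandora_server.conf to parse_server_conf and then call ha_problems on the result. An empty list means none of these checks failed.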

Once the modifications are made in the configuration file, we start pandora_ha on both nodes:

systemctl start pandora_ha

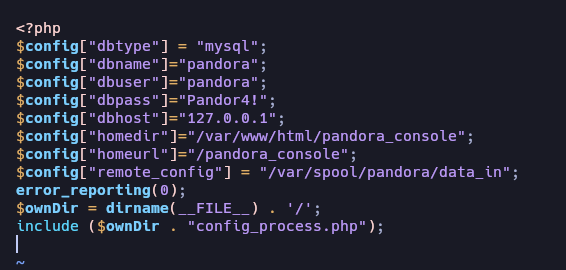

Adjustments in Pandora FMS Console

The Console's config.php file, located at /var/www/html/pandora_console/include/, must be configured by adding the following value:

$config["remote_config"] = "/var/spool/pandora/data_in";

With this, Pandora FMS will read the file /var/spool/pandora/data_in/conf/pandora_ha_hosts.conf, from which it will get the IP address to make the connection.

Pandora FMS Console Configuration

Version 770 or later.

A new parameter has been added to the config.php configuration file. It indicates the path of the exchange directory used by Pandora FMS (by default, /var/spool/pandora/data_in).

If configured, it will look for the file /var/spool/pandora/data_in/conf/pandora_ha_hosts.conf from where it will obtain the IP address to make the connection.

$config["remote_config"] = "/var/spool/pandora/data_in";

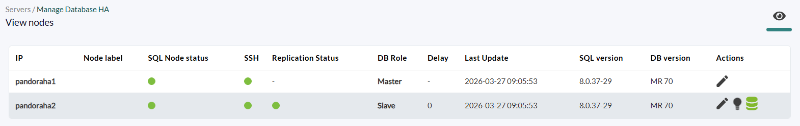

In the Pandora FMS console you can view the cluster status by accessing the Manage HA view.

https://PANDORA_IP/pandora_console/index.php?sec=gservers&sec2=enterprise/godmode/servers/HA_cluster

The data in this view are constantly updated thanks to pandora_ha; no previous configuration procedure needs to be done to be able to view this section as long as pandora_server.conf is correctly configured with the parameters mentioned in the previous section.

Among the available actions, you can configure a label for each node and synchronize the SLAVE node using the resynchronization icon.

This icon can have the following statuses:

- Green: Normal, no operation to perform.

- Blue: Pending resynchronization.

- Yellow: Resynchronization is being performed.

- Red: Error, resynchronization failed.

HA View

Operation verification

Once the steps described above have been followed, we can check the cluster status by accessing the HA management view:

https://PANDORA_IP/pandora_console/index.php?sec=gservers&sec2=enterprise/godmode/servers/new_HA_cluster

In it, we will see information about the connection and replication status:

- IP: IP addresses of the nodes forming the cluster.

- Node label: Identifying labels that can be configured for the nodes.

- SQL Node status: MySQL service status.

- SSH: SSH connection status.

- Replication Status: Replication status.

- DB Role: The roles in the nodes forming the cluster to identify the Master.

- Delay: Delay time or how long it takes the non-Master node to receive DB changes.

- Last Update: Time of the last update.

- SQL version: Installed MySQL version.

- DB version: Installed version of the schema in the Pandora FMS DB.

Available actions

In the last column of the HA view, we have different actions we can run:

- Used to edit the Node label field.

- Used to disable/enable the slave node.

- Used to launch resynchronization from the console; it can have the following statuses:

- Green: Normal, no operation to perform.

- Blue: Pending resynchronization.

- Yellow: Resynchronization is being performed.

- Red: Error, resynchronization failed.

Manual DB resynchronization

Using the synchronization script: the Pandora FMS server includes a script that resynchronizes the SLAVE database when it falls out of sync with the MASTER.

To run this script manually, use the following syntax:

./pandora_ha_resync_slave.sh "pandora_server.conf file" MASTER SLAVE

For example, to manually synchronize node 1 to node 2, the execution would be as follows:

/usr/share/pandora_server/util/pandora_ha_resync_slave.sh /etc/pandora/pandora_server.conf pandoraha1 pandoraha2

To enable automatic recovery of the HA environment when a synchronization problem occurs between MASTER and SLAVE, set the splitbrain_autofix configuration token to 1 in the server configuration file (/etc/pandora/pandora_server.conf).

With this setting, whenever a split-brain occurs (both servers hold the master role) or the MASTER and SLAVE nodes fall out of sync, pandora_ha will try to run the pandora_ha_resync_slave.sh script to copy the current state of the MASTER onto the SLAVE.

This process generates system events that indicate when it starts, when it ends, and whether any error occurred.
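As a sketch of the configuration change described above, the following bash function sets a token in pandora_server.conf. The helper name set_conf_token is hypothetical and not part of Pandora FMS. It assumes the file uses one "token value" pair per line, and it relies on GNU sed for sed -i, as found on typical Linux installations. If an uncommented line for the token already exists, the function rewrites it. Otherwise it appends a new line.

```bash
# Hypothetical helper: set "TOKEN VALUE" in a Pandora FMS server config file.
# Assumes one "token value" pair per line and GNU sed (sed -i).
set_conf_token() {
  local conf="$1" token="$2" value="$3"
  if grep -Eq "^${token}[[:space:]]" "$conf"; then
    # An active (uncommented) line exists: rewrite it with the new value.
    sed -i -E "s/^${token}[[:space:]].*/${token} ${value}/" "$conf"
  else
    # The token is absent or only commented out: append a new line.
    printf '%s %s\n' "$token" "$value" >> "$conf"
  fi
}
```

For example, set_conf_token /etc/pandora/pandora_server.conf splitbrain_autofix 1 enables the automatic recovery described above.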

Corosync-Pacemaker HA Environments Migration

The main difference between an HA environment based on MySQL/Percona Server 5 and the current HA mode is that the cluster nodes are now managed by pandora_ha instead of Corosync-Pacemaker, which is no longer used.

The environment migration will consist of:

1.- Upgrade Percona from version 5.7 to version 8.0 (see “MySQL 8 Installation and Upgrade”).

2.- Install xtrabackup-80 on all nodes:

yum install percona-xtrabackup-80

If Ubuntu server is used, see the section “Percona 8 Installation for Ubuntu Server”.

3.- Recreate all users on the MASTER node with the mysql_native_password authentication plugin.

In the following instructions, the mysql_native_password authentication method is used. To use caching_sha2_password, see the topic “SHA Authentication Method Configuration in MySQL”.

mysql > CREATE USER 'replicationuser'@'%' IDENTIFIED WITH mysql_native_password BY 'pandora';

mysql > GRANT REPLICATION CLIENT, REPLICATION SLAVE ON *.* TO 'replicationuser'@'%';

mysql > CREATE USER 'pandora'@'%' IDENTIFIED WITH mysql_native_password BY 'pandora';

mysql > GRANT ALL PRIVILEGES ON pandora.* TO 'pandora'@'%';

4.- Copy the database from the MASTER node to the SLAVE:

4.1.- Perform a full backup of the MASTER database:

MASTER # xtrabackup --backup --target-dir=/root/pandoradb.bak/

MASTER # xtrabackup --prepare --target-dir=/root/pandoradb.bak/
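Before you copy the backup to the SLAVE, you may want to confirm that the prepare phase completed. The bash function below is a hypothetical helper and is not part of Pandora FMS. It assumes that, after a successful --prepare, xtrabackup records backup_type = full-prepared in the xtrabackup_checkpoints file of the target directory, as Percona XtraBackup 8.0 does.

```bash
# Hypothetical helper: succeed only if an xtrabackup target directory holds a
# prepared full backup, judged by the backup_type line in xtrabackup_checkpoints.
backup_is_prepared() {
  local dir="${1%/}"                      # drop a trailing slash, if any
  local checkpoints="$dir/xtrabackup_checkpoints"
  [ -f "$checkpoints" ] || return 1       # no checkpoints file: not a backup
  grep -Eq '^backup_type[[:space:]]*=[[:space:]]*full-prepared[[:space:]]*$' "$checkpoints"
}
```

For example: backup_is_prepared /root/pandoradb.bak/ || echo "Backup not prepared" >&2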

4.2.- Get the position of the binary log from the backup:

MASTER # cat /root/pandoradb.bak/xtrabackup_binlog_info

binlog.000003 157

Take note of these two values as they are needed in step 4.6.
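Step 4.6 uses these two values directly in the CHANGE MASTER TO statement. The following bash sketch builds that statement from the file, so you do not have to copy the values by hand. The function name change_master_sql is hypothetical. The default host, user and password mirror the examples in this guide (pandoraha1, replicationuser, pandora) and should be adjusted to your environment.

```bash
# Hypothetical helper: print the CHANGE MASTER TO statement for the SLAVE using
# the binlog file and position stored in xtrabackup_binlog_info.
# Defaults mirror this guide's examples; override them for your environment.
change_master_sql() {
  local info_file="$1"
  local host="${2:-pandoraha1}" user="${3:-replicationuser}" pass="${4:-pandora}"
  local log_file="" log_pos=""
  # First line format: <binlog file> <position> [GTID set], whitespace-separated.
  read -r log_file log_pos _ < "$info_file"
  [ -n "$log_file" ] || return 1
  # Refuse to emit SQL if the position is missing or not numeric.
  case "$log_pos" in
    '' | *[!0-9]*) return 1 ;;
  esac
  printf "CHANGE MASTER TO MASTER_HOST='%s', MASTER_USER='%s', MASTER_PASSWORD='%s', MASTER_LOG_FILE='%s', MASTER_LOG_POS=%s;\n" \
    "$host" "$user" "$pass" "$log_file" "$log_pos"
}
```

Run it on the MASTER, for example change_master_sql /root/pandoradb.bak/xtrabackup_binlog_info, and paste the output into the MySQL shell of the SLAVE.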

4.3.- With the mysqld service stopped on the SLAVE server, empty its MySQL data directory. Then send the backup from the MASTER with rsync:

SLAVE # rm -rf /var/lib/mysql/*

MASTER # rsync -avpP -e ssh /root/pandoradb.bak/ pandoraha2:/var/lib/mysql/

4.4.- On the SLAVE server, set the file ownership and SELinux context so that the MySQL server can access the transferred files:

SLAVE # chown -R mysql:mysql /var/lib/mysql

SLAVE # chcon -R system_u:object_r:mysqld_db_t:s0 /var/lib/mysql

4.5.- Start the mysqld service on the SLAVE server:

SLAVE # systemctl start mysqld

4.6.- Start the SLAVE mode on this server (use the data from step 4.2):

SLAVE # mysql -u root -ppandora

SLAVE # mysql > reset slave all;

SLAVE # mysql > CHANGE MASTER TO MASTER_HOST='pandoraha1', MASTER_USER='replicationuser', MASTER_PASSWORD='pandora', MASTER_LOG_FILE='binlog.000003', MASTER_LOG_POS=157;

SLAVE # mysql > start slave;

SLAVE # mysql > SET GLOBAL read_only=1;

If you prefer to build the environment from scratch on new servers, follow the current installation procedure in the new environment. At the step where the Pandora FMS database is created, import a backup of the database from the old environment.

You will also need to apply the Pandora FMS Console and Server configuration described in previous sections to the new environment.

Split-Brain

Factors such as high latency or network outages can cause both MySQL servers to acquire the master role. If the automatic resynchronization option (splitbrain_autofix) is enabled in pandora_ha, the server chooses which node acts as master and synchronizes its data onto the slave. Any information collected on the other node in the meantime is then lost.

If the option is not enabled, you can recover that information by merging the data with the following procedure.

This manual procedure only recovers data and events between two dates. It only covers agents and modules that already exist on the node into which the data is merged.

Agents or configuration items (alerts, policies, etc.) created during the split-brain are not taken into account. Only data and events are recovered, that is, the contents of the tagente_datos, tagente_datos_string and tevento tables.

The following commands will be executed on the node that was disconnected (the one that will be promoted to SLAVE), where yyyy-mm-dd hh:mm:ss is the Split-Brain start date and time and yyyy2-mm2-dd2 hh2:mm2:ss2 is its end date and time.

Run mysqldump with a user that has sufficient privileges to dump the data:

mysqldump -u root -p -n -t --skip-create-options --databases pandora --tables tagente_datos --where='FROM_UNIXTIME(utimestamp)> "yyyy-mm-dd hh:mm:ss" AND FROM_UNIXTIME(utimestamp) <"yyyy2-mm2-dd2 hh2:mm2:ss2"'> tagente_datos.dump.sql

mysqldump -u root -p -n -t --skip-create-options --databases pandora --tables tagente_datos_string --where='FROM_UNIXTIME(utimestamp)> "yyyy-mm-dd hh:mm:ss" AND FROM_UNIXTIME(utimestamp) <"yyyy2-mm2-dd2 hh2:mm2:ss2"'> tagente_datos_string.dump.sql

mysqldump -u root -p -n -t --skip-create-options --databases pandora --tables tevento --where='FROM_UNIXTIME(utimestamp)> "yyyy-mm-dd hh:mm:ss" AND FROM_UNIXTIME(utimestamp) <"yyyy2-mm2-dd2 hh2:mm2:ss2"' | sed -e "s/([0-9]*,/(NULL,/gi"> tevento.dump.sql
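All three commands above use the same time window, so you can wrap them in a small bash script that builds the WHERE clause once. This is a sketch. The function names split_brain_where and dump_split_brain_data are hypothetical. The script runs the same mysqldump and sed commands shown above and writes the same three dump files to the current directory.

```bash
# Hypothetical helpers for the split-brain data recovery described above.
# split_brain_where prints the WHERE condition for a "YYYY-MM-DD hh:mm:ss" window.
split_brain_where() {
  local start="$1" end="$2"
  printf 'FROM_UNIXTIME(utimestamp) > "%s" AND FROM_UNIXTIME(utimestamp) < "%s"' \
    "$start" "$end"
}

# dump_split_brain_data runs the same three mysqldump commands as above and
# writes the dump files to the current directory. mysqldump asks for the root
# password once per table because of -p.
dump_split_brain_data() {
  local start="$1" end="$2" where table
  where="$(split_brain_where "$start" "$end")"
  for table in tagente_datos tagente_datos_string; do
    mysqldump -u root -p -n -t --skip-create-options --databases pandora \
      --tables "$table" --where="$where" > "$table.dump.sql" || return 1
  done
  # For events, replace the leading event ID of each row with NULL, as above.
  mysqldump -u root -p -n -t --skip-create-options --databases pandora \
    --tables tevento --where="$where" \
    | sed -e "s/([0-9]*,/(NULL,/gi" > tevento.dump.sql
  # Report mysqldump's exit status rather than sed's.
  return "${PIPESTATUS[0]}"
}
```

For example: dump_split_brain_data "2024-01-01 10:00:00" "2024-01-01 12:30:00" (the dates are placeholders for the actual start and end of the split-brain).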

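Once you have the dumps of those tables, load the data into the MASTER node. In the tevento dump, the sed filter replaces the first value of each row (the event ID) with NULL. The MASTER then assigns new event IDs, so the inserted rows do not collide with the primary keys of events already stored there.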

MASTER # cat tagente_datos.dump.sql | mysql -u root -p pandora

MASTER # cat tagente_datos_string.dump.sql | mysql -u root -p pandora

MASTER # cat tevento.dump.sql | mysql -u root -p pandora

After loading the recovered data into the MASTER, resynchronize the node that will be demoted to SLAVE using the following procedure:

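0.- Delete the /root/pandoradb.bak/ directory on the MASTER, because xtrabackup --backup needs an empty or non-existent target directory:

MASTER # rm -rf /root/pandoradb.bak/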

1.- Perform a full backup of the MASTER database:

MASTER # xtrabackup --backup --target-dir=/root/pandoradb.bak/

MASTER # xtrabackup --prepare --target-dir=/root/pandoradb.bak/

2.- Get the binary log position of the backup:

MASTER # cat /root/pandoradb.bak/xtrabackup_binlog_info

You will get something similar to the following (take note of these values, as they are needed in step 6):

binlog.000003 157

At this point, stop the mysqld service on the SLAVE and empty the contents of /var/lib/mysql/ there, as in step 4.3 of the migration procedure:

SLAVE # rm -rf /var/lib/mysql/*

3.- Send the backup to the SLAVE server with rsync:

MASTER # rsync -avpP -e ssh /root/pandoradb.bak/ pandoraha2:/var/lib/mysql/

4.- On the SLAVE server, set the file ownership and SELinux context so that the MySQL server can access the transferred files:

SLAVE # chown -R mysql:mysql /var/lib/mysql

SLAVE # chcon -R system_u:object_r:mysqld_db_t:s0 /var/lib/mysql

5.- Start the mysqld service on the SLAVE server:

SLAVE # systemctl start mysqld

6.- Start SLAVE mode on this server, using the values obtained in step 2:

SLAVE # mysql -u root -ppandora

SLAVE # mysql > reset slave all;

SLAVE # mysql > CHANGE MASTER TO MASTER_HOST='pandoraha1', MASTER_USER='replicationuser', MASTER_PASSWORD='pandora', MASTER_LOG_FILE='binlog.000003', MASTER_LOG_POS=157;

SLAVE # mysql > start slave;

SLAVE # mysql > SET GLOBAL read_only=1;

Once all these steps are finished, run the show slave status\G command in the MySQL shell of the SLAVE to confirm that the node is in slave mode. If it is set up correctly, the output should be similar to the following:

*************************** 1. row ***************************

Slave_IO_State: Waiting for source to send event

Master_Host: pandoraha1

Master_User: root

Master_Port: 3306

Connect_Retry: 60

Master_Log_File: binlog.000018

Read_Master_Log_Pos: 1135140

Relay_Log_File: relay-bin.000002

Relay_Log_Pos: 1135306

Relay_Master_Log_File: binlog.000018

Slave_IO_Running: Yes

Slave_SQL_Running: Yes

Replicate_Do_DB: pandora

Replicate_Ignore_DB:

Replicate_Do_Table:

Replicate_Ignore_Table:

Replicate_Wild_Do_Table:

Replicate_Wild_Ignore_Table:

Last_Errno: 0

Last_Error:

Skip_Counter: 0

Exec_Master_Log_Pos: 1135140

Relay_Log_Space: 1135519

Until_Condition: None

Until_Log_File:

Until_Log_Pos: 0

Master_SSL_Allowed: No

Master_SSL_CA_File:

Master_SSL_CA_Path:

Master_SSL_Cert:

Master_SSL_Cipher:

Master_SSL_Key:

Seconds_Behind_Master: 0

Master_SSL_Verify_Server_Cert: No

Last_IO_Errno: 0

Last_IO_Error:

Last_SQL_Errno: 0

Last_SQL_Error:

Replicate_Ignore_Server_Ids:

Master_Server_Id: 1

Master_UUID: fa99f1d6-b76a-11ed-9bc1-000c29cbc108

Master_Info_File: mysql.slave_master_info

SQL_Delay: 0

SQL_Remaining_Delay: NULL

Slave_SQL_Running_State: Replica has read all relay log; waiting for more updates

Master_Retry_Count: 86400

Master_Bind:

Last_IO_Error_Timestamp:

Last_SQL_Error_Timestamp:

Master_SSL_Crl:

Master_SSL_Crlpath:

Retrieved_Gtid_Set:

Executed_Gtid_Set:

Auto_Position: 0

Replicate_Rewrite_DB:

Channel_Name:

Master_TLS_Version:

Master_public_key_path:

Get_master_public_key: 0

Network_Namespace:

1 row in set, 1 warning (0,00 sec)

From this moment on, binary replication should be enabled and running correctly again.
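To check the replica from a script instead of reading the full output, the Slave_IO_Running, Slave_SQL_Running and Seconds_Behind_Master fields are usually enough. The bash function below is a hypothetical helper. It reads show slave status\G output on standard input and succeeds only if both replication threads report Yes and the lag does not exceed a threshold. The default threshold of 60 seconds is an arbitrary assumption.

```bash
# Hypothetical helper: read "show slave status\G" output on stdin and succeed
# only if both replication threads run and the lag is at most MAX_LAG seconds.
replication_healthy() {
  local max_lag="${1:-60}"                # arbitrary default threshold
  awk -v max_lag="$max_lag" '
    # Each field is printed as "Name: value"; strip the leading padding first.
    { sub(/^[ \t]+/, "") }
    /^Slave_IO_Running:/      { io  = $2 }
    /^Slave_SQL_Running:/     { sql = $2 }
    /^Seconds_Behind_Master:/ { lag = $2 }
    END {
      if (io != "Yes" || sql != "Yes") exit 1
      # NULL means the lag is unknown (e.g. the SQL thread is stopped).
      if (lag == "" || lag == "NULL" || lag + 0 > max_lag + 0) exit 1
      exit 0
    }'
}
```

For example: mysql -u root -p -e 'show slave status\G' | replication_healthy 60 && echo "Replication OK"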
